Edge AI for Machine Learning: Achieving 30% Faster Inferences by 2026

Edge AI for Machine Learning revolutionizes real-time data processing by deploying AI models directly on devices, promising up to 30% faster inferences by 2026 through optimized algorithms and specialized hardware, enhancing privacy and reducing latency.

The landscape of artificial intelligence is rapidly evolving, pushing the boundaries of what’s possible directly on our devices. By 2026, the adoption of Edge AI for Machine Learning is projected to achieve a remarkable 30% acceleration in real-time inferences, fundamentally transforming how we interact with technology. This shift from cloud-centric AI to on-device processing promises not only speed but also enhanced privacy and reliability.

Understanding Edge AI: Beyond the Cloud

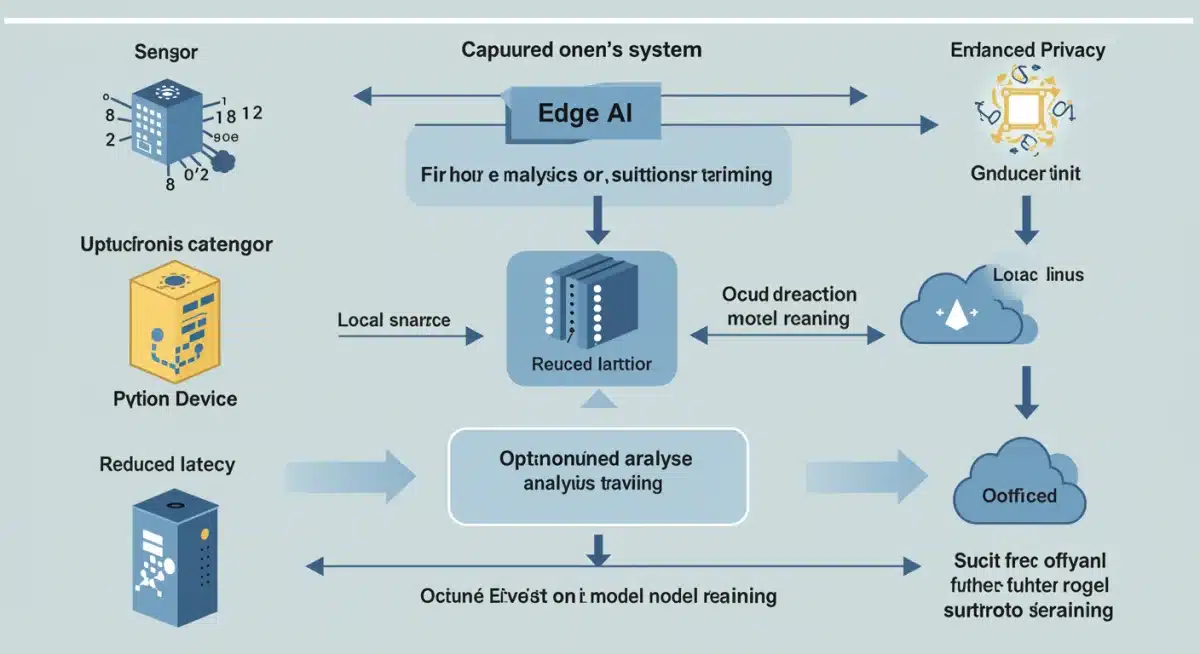

Edge AI represents a paradigm shift where AI computations, particularly machine learning inference, are performed on local devices rather than being offloaded to centralized cloud servers. This approach minimizes latency, reduces bandwidth consumption, and often enhances data privacy and security. The implications for various industries are vast, from autonomous vehicles to smart manufacturing.

The core principle behind Edge AI is bringing computation closer to the data source. Instead of sending raw data to a distant data center for processing, the intelligence resides on the device itself. This decentralization of AI is crucial for applications requiring immediate decision-making and those operating in environments with limited or intermittent connectivity.

The Latency Advantage

One of the most compelling benefits of Edge AI is the dramatic reduction in latency. A round trip to the cloud carries an inherent delay: even at the speed of light, propagation takes time, and routing, queuing, and server-side processing add more. For critical applications, this delay can be unacceptable.

- Real-time Decision Making: Enables instant responses in autonomous systems, medical diagnostics, and industrial automation.

- Faster User Experience: Improves responsiveness in consumer electronics, such as smart assistants and augmented reality applications.

- Reduced Network Load: Decreases the amount of data transferred over networks, freeing up bandwidth.

- Enhanced Reliability: Systems can operate independently of network connectivity, crucial for remote or critical infrastructure.
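
To put a number on the propagation part of that delay, here is a minimal back-of-the-envelope sketch. It assumes signals in optical fiber travel at roughly two thirds of the speed of light in vacuum, and the 1,500 km distance is a hypothetical example, not a figure from any particular deployment.

```python
# Rough lower bound on network round-trip time from propagation delay alone.
# Assumption: signals in fiber travel at about 2/3 of the vacuum speed of light.

SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBER_VELOCITY_FACTOR = 2 / 3  # approximate; varies with the fiber


def min_round_trip_ms(distance_km: float) -> float:
    """Best-case round trip over fiber, ignoring routing, queuing and compute."""
    one_way_s = (distance_km * 1000) / (SPEED_OF_LIGHT_M_PER_S * FIBER_VELOCITY_FACTOR)
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    # Hypothetical data center 1,500 km from the device.
    print(f"{min_round_trip_ms(1500):.1f} ms")
```

Under these assumptions the floor is about 15 ms before any routing or server time is counted, which is already significant for a control loop that must react within a few tens of milliseconds.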

In essence, Edge AI empowers devices to act intelligently and autonomously, making decisions without external intervention. This capability is not just about speed; it’s about creating more robust, efficient, and secure AI-powered systems that can operate effectively in diverse and challenging environments. The move towards distributed intelligence is a foundational step in the next generation of AI applications.

Practical Solutions for On-Device Model Deployment

Deploying machine learning models directly onto edge devices requires a strategic approach, encompassing model optimization, hardware selection, and robust deployment frameworks. The goal is to maximize performance within the constraints of device resources, ensuring efficient and fast inference at the edge.

One of the primary challenges is adapting models, often trained on powerful cloud infrastructure, to run effectively on resource-constrained devices. This involves a suite of techniques designed to reduce model size and computational demands without significantly compromising accuracy. The adoption of specialized toolkits and methodologies is key to achieving this balance.
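
Whether an optimization actually pays off on a given device is easiest to judge by measuring it. The sketch below times an arbitrary inference callable and expresses the gain as a latency reduction. The helper names are illustrative, and it assumes "30% faster" means 30% lower latency per inference, which is only one possible reading of such a claim.

```python
import statistics
import time
from typing import Callable


def median_latency_ms(run_inference: Callable[[], object],
                      runs: int = 50, warmup: int = 5) -> float:
    """Median wall-clock latency of one call, in milliseconds."""
    for _ in range(warmup):
        run_inference()  # warm caches before timing
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


def latency_reduction_percent(baseline_ms: float, optimized_ms: float) -> float:
    """Percentage by which latency dropped relative to the baseline."""
    return (baseline_ms - optimized_ms) / baseline_ms * 100
```

For example, a model that goes from 10 ms to 7 ms per inference shows a 30% latency reduction. Measuring on the target device matters, since results on a development workstation rarely transfer to constrained hardware.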

Model Optimization Techniques

Achieving optimal performance on edge devices hinges on sophisticated model optimization. These techniques are vital for shrinking model footprints and accelerating inference times.

- Quantization: Reduces the precision of model weights and activations (e.g., from 32-bit floating point to 8-bit integers), significantly decreasing model size and computational requirements.

- Pruning: Eliminates redundant or less important connections (weights) in a neural network, leading to sparser, smaller models that require fewer computations.

- Knowledge Distillation: Transfers knowledge from a large, complex “teacher” model to a smaller, more efficient “student” model, allowing the student to achieve comparable performance with fewer parameters.

- Neural Architecture Search (NAS): Automates the design of efficient neural network architectures specifically tailored for edge deployment, often discovering more compact and performant models.
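
The first two techniques can be sketched in a few lines of plain Python. This is a simplified illustration on flat lists of weights, not how any particular toolkit implements them: the quantizer uses a single affine scale and zero point for the whole list, and the pruner uses plain magnitude ranking. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Affine quantization of floats to the int8 range [-128, 127]."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # make sure 0.0 is representable
    scale = (hi - lo) / 255 or 1.0       # avoid a zero scale for all-zero input
    zero_point = round(-128 - lo / scale)
    q = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map int8 codes back to approximate float values."""
    return [(x - zero_point) * scale for x in q]


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]
```

Each quantized value takes one byte instead of four, at the cost of a rounding error of at most one quantization step per weight. Production toolchains typically refine this with per-channel scales and calibration data.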

Beyond these techniques, selecting the right hardware platform is equally critical. Edge devices vary widely in their processing capabilities, memory, and power consumption. Matching the optimized model with suitable hardware ensures that the deployed solution is both efficient and cost-effective. Frameworks like TensorFlow Lite and OpenVINO provide crucial support for this entire process, from optimization to deployment.
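
A quick way to check whether a model can fit a device at all is to estimate the size of its weights at different precisions. The sketch below does only that arithmetic; the 25-million-parameter model and the 64 MB budget are hypothetical, and real deployments also need room for activations and the runtime itself.

```python
# Bytes needed per parameter for common numeric formats.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_footprint_mb(num_params: int, dtype: str) -> float:
    """Approximate size of the weights alone, in megabytes (10^6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1_000_000


def fits_in_memory(num_params: int, dtype: str, budget_mb: float) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weight_footprint_mb(num_params, dtype) <= budget_mb


if __name__ == "__main__":
    params = 25_000_000  # hypothetical model size
    for dtype in BYTES_PER_PARAM:
        size = weight_footprint_mb(params, dtype)
        print(dtype, size, "MB, fits in 64 MB:", fits_in_memory(params, dtype, 64))
```

In this example the weights need 100 MB in 32-bit floating point but only 25 MB as 8-bit integers, which is the difference between not fitting and fitting the assumed budget.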

Hardware Accelerators: The Backbone of Edge Performance

The quest for faster real-time inferences at the edge is significantly bolstered by the advent of specialized hardware accelerators. These components are designed to efficiently execute the intensive computations inherent in machine learning models, far surpassing the capabilities of general-purpose CPUs for AI tasks.

While traditional processors can handle many computational tasks, their architecture is not optimized for the parallel processing demands of neural networks. This is where accelerators come into play, offering a substantial boost in performance per watt and often at a lower cost for dedicated AI operations.

Types of Edge AI Accelerators

A diverse array of hardware accelerators is emerging, each tailored to different needs and performance envelopes, driving the capabilities of Edge AI forward.

- GPUs (Graphics Processing Units): While traditionally used for graphics, GPUs are highly effective for parallel computations required by deep learning, offering significant acceleration for edge devices with higher power budgets.

- TPUs (Tensor Processing Units): Developed by Google, TPUs are custom-built ASICs (Application-Specific Integrated Circuits) designed specifically for neural network workloads. The data-center versions handle both training and inference, while the Edge TPU variant targets low-power inference on devices.

- NPUs (Neural Processing Units): Many chip manufacturers are integrating NPUs directly into their SoCs (Systems-on-Chip) for mobile and IoT devices, offering dedicated, low-power acceleration for AI tasks.

- FPGAs (Field-Programmable Gate Arrays): FPGAs offer flexibility, allowing developers to customize hardware logic for specific AI models, providing a balance between performance and adaptability.

The strategic selection of the appropriate hardware accelerator is paramount for any Edge AI project. Factors such as power consumption, cost, performance requirements, and the specific type of machine learning model being deployed all influence this decision. As these accelerators continue to evolve, they will further cement the feasibility and widespread adoption of high-performance Edge AI solutions, pushing the boundaries of what’s possible on resource-constrained devices.
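
One way to make that selection explicit is to filter candidates by hard limits and then rank the survivors by performance per watt. The sketch below assumes you have already benchmarked your own model on each candidate. The class, the function, and every number in the usage example are placeholders for illustration, not vendor specifications.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Accelerator:
    name: str
    inferences_per_second: float  # measured on the target model
    watts: float
    unit_cost: float


def pick_accelerator(candidates: List[Accelerator], min_ips: float,
                     max_watts: float, max_cost: float) -> Optional[Accelerator]:
    """Return the feasible candidate with the best inferences per watt."""
    feasible = [
        c for c in candidates
        if c.inferences_per_second >= min_ips
        and c.watts <= max_watts
        and c.unit_cost <= max_cost
    ]
    return max(feasible, key=lambda c: c.inferences_per_second / c.watts, default=None)


if __name__ == "__main__":
    # Placeholder figures only.
    options = [
        Accelerator("option_a", 120, 10.0, 200),
        Accelerator("option_b", 60, 2.0, 60),
        Accelerator("option_c", 30, 1.5, 25),
    ]
    print(pick_accelerator(options, min_ips=50, max_watts=5, max_cost=100))
```

With these placeholder values only `option_b` satisfies all three limits. Ranking by a single metric is a simplification; a real project might weigh toolchain support or thermal limits as well.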

Data Privacy and Security Implications at the Edge

One of the most significant advantages of processing data at the edge, rather than in the cloud, is the inherent improvement in data privacy and security. By keeping sensitive information closer to its source and minimizing its transmission over networks, the risk of data breaches and unauthorized access is substantially reduced. This is a critical factor driving the adoption of Edge AI, especially in industries dealing with highly regulated data.

The traditional cloud model often necessitates sending raw data to remote servers. Even when that data is encrypted in transit, it must be decrypted for processing and is frequently stored remotely, creating multiple points of vulnerability. Edge AI, conversely, processes data locally, allowing for immediate anonymization or aggregation before any data, if necessary, is sent to the cloud. This localized processing significantly strengthens the protection of personal and proprietary information.

Mitigating Risks with Local Processing

Edge AI provides several mechanisms to enhance data privacy and security, addressing concerns that are increasingly prominent in our data-driven world.

- Reduced Data Exposure: Less of the sensitive data leaves the local device or network, minimizing the attack surface for cyber threats.

- Compliance with Regulations: Facilitates adherence to stringent data protection regulations like GDPR and CCPA by keeping personal data within defined boundaries.

- Enhanced Anonymization: Data can be processed and anonymized on the device before transmission, so that personally identifiable information (PII) does not need to leave the device in raw form.

- Independent Operation: Devices can function securely even if disconnected from the internet, protecting data from external threats during network outages.
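
The on-device processing described in this list can be sketched as follows, using only Python's standard library. The identifier is replaced with a keyed hash (HMAC-SHA256) and raw readings are reduced to aggregates before upload. Note that keyed hashing is pseudonymization rather than full anonymization, and that the function names, payload fields, and heart-rate scenario are all hypothetical.

```python
import hashlib
import hmac
import statistics
from typing import Dict, List


def pseudonymize(user_id: str, device_secret: bytes) -> str:
    """Keyed hash of the identifier, so the raw ID is not part of the payload."""
    return hmac.new(device_secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def summarize_for_upload(user_id: str, heart_rates: List[float],
                         device_secret: bytes) -> Dict[str, object]:
    """Build the payload that would be sent upstream: aggregates only."""
    return {
        "subject": pseudonymize(user_id, device_secret),
        "count": len(heart_rates),
        "mean": statistics.mean(heart_rates),
        "max": max(heart_rates),
    }
```

The raw readings and the original identifier stay on the device; only the summary is transmitted. Whether that is sufficient for a given regulation depends on the data and the threat model, so treat this as a starting point rather than a compliance recipe.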

Furthermore, the distributed nature of Edge AI systems means there is no single point of failure that could compromise all data. Even if one edge device is compromised, the impact is localized, preventing a system-wide breach. The combination of local processing, robust encryption at rest and in transit, and secure hardware enclaves makes Edge AI a powerful ally in the ongoing battle for data privacy and cybersecurity. The future of secure AI heavily relies on these decentralized architectures.

Edge AI in Action: Real-World Applications by 2026

By 2026, Edge AI will be deeply integrated into numerous facets of daily life and industrial operations, moving beyond experimental phases to become a cornerstone of intelligent systems. Its ability to provide real-time insights and autonomous functionalities directly on devices will unlock unprecedented efficiencies and create novel user experiences across diverse sectors.

The practical applications of Edge AI are vast and varied, touching everything from personal gadgets to critical infrastructure. The emphasis is on enabling devices to make smart, immediate decisions without constant reliance on cloud connectivity, thereby enhancing performance, reliability, and privacy.

Transformative Use Cases

Edge AI is already making significant inroads, and by 2026, its impact will be even more pronounced in several key areas:

- Autonomous Vehicles: On-device AI processes sensor data instantly for navigation, obstacle detection, and driver assistance systems, crucial for split-second decision-making.

- Smart Manufacturing: Edge devices monitor production lines for defects, predict equipment failures, and optimize processes in real time, leading to increased efficiency and reduced downtime.

- Healthcare: Wearable devices and portable diagnostic tools use Edge AI for continuous health monitoring, early disease detection, and personalized treatment recommendations, ensuring immediate alerts and interventions.

- Smart Cities: Edge cameras and sensors analyze traffic flow, manage public safety, and optimize resource allocation without sending all video feeds to the cloud, preserving privacy and reducing network strain.

- Retail: In-store analytics powered by Edge AI monitors inventory, analyzes customer behavior, and personalizes shopping experiences, all while maintaining transactional security and privacy.

These examples illustrate how Edge AI is not just an incremental improvement but a fundamental shift in how intelligence is delivered and consumed. The ability to perform complex machine learning inferences at the point of data generation empowers devices to be truly smart, responsive, and secure, paving the way for a more integrated and intelligent world by 2026.

Overcoming Challenges in Edge AI Adoption

While the benefits of Edge AI are compelling, its widespread adoption is not without hurdles. Developers and organizations must navigate a complex landscape of technical, economic, and operational challenges to fully realize the potential of on-device machine learning. Addressing these issues proactively is crucial for successful implementation and scaling.

The transition from traditional cloud-based AI to edge deployments requires a re-evaluation of existing workflows, skill sets, and infrastructure. It’s not simply a matter of porting models; it involves a holistic approach to design, development, and maintenance within the constraints of edge environments.

Key Challenges and Solutions

Several significant challenges need to be addressed to accelerate Edge AI adoption and ensure its long-term viability.

- Resource Constraints: Edge devices often have limited computational power, memory, and battery life. Solutions involve aggressive model optimization (quantization, pruning), specialized hardware accelerators, and efficient software frameworks tailored for low-resource environments.

- Model Development and Deployment Complexity: Developing and deploying models for diverse edge hardware can be intricate. Standardized toolkits (e.g., TensorFlow Lite, OpenVINO), automated deployment pipelines, and MLOps practices customized for edge are essential.

- Data Management and Orchestration: Managing data synchronization, model updates, and device orchestration across a vast network of edge devices is complex. Centralized management platforms, federated learning approaches, and robust over-the-air (OTA) update mechanisms are key.

- Security and Privacy: While Edge AI enhances privacy, securing individual devices from tampering and ensuring data integrity remains a challenge. Hardware-based security, secure boot processes, and strong encryption protocols are vital.

- Lack of Standardized Ecosystems: The fragmented nature of edge hardware and software ecosystems can hinder interoperability. Industry collaborations and open standards are emerging to foster a more unified development environment.
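
Of the approaches mentioned in this list, federated learning is the easiest to illustrate compactly. In its basic form, each device trains locally and sends only its model weights, and a coordinator averages them, weighted by how many samples each device used. The sketch below shows just that averaging step on flat weight lists; the function name is illustrative and no real framework API is implied.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Average client weight vectors, weighted by local sample counts."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


if __name__ == "__main__":
    # Two hypothetical devices: the second trained on three times as much data.
    print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))  # [2.5, 3.5]
```

The raw training data never leaves the devices, which is why this approach pairs naturally with the privacy goals of edge deployments.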

Overcoming these challenges requires continuous innovation, collaboration across the industry, and the development of robust tools and methodologies. As these solutions mature, the path for broader and more impactful Edge AI adoption will become clearer, solidifying its role as a transformative technology by 2026 and beyond.

| Key Aspect | Brief Description |
|---|---|
| Real-time Inferences | Achieving 30% faster AI model predictions directly on devices by 2026. |
| Model Optimization | Techniques like quantization and pruning to enable efficient on-device execution. |
| Hardware Acceleration | Utilization of GPUs, TPUs, and NPUs for enhanced edge AI performance. |
| Data Privacy | Processing data locally significantly reduces exposure and enhances security. |

Frequently Asked Questions About Edge AI

What is the primary benefit of Edge AI?

The primary benefit of Edge AI is enabling real-time inference directly on devices, significantly reducing latency and improving responsiveness. This local processing minimizes reliance on cloud connectivity, crucial for applications requiring immediate decision-making and enhanced data privacy.

How does Edge AI enhance data privacy and security?

Edge AI enhances data privacy and security by processing sensitive information locally on the device, rather than transmitting it to the cloud. This reduces data exposure, minimizes the risk of breaches, and helps comply with strict data protection regulations like GDPR and CCPA.

What hardware does efficient Edge AI rely on?

Efficient Edge AI relies heavily on specialized hardware accelerators. These include GPUs, TPUs, and NPUs, which are optimized for the parallel processing tasks inherent in machine learning models. These accelerators enable faster and more power-efficient inference on resource-constrained edge devices.

What challenges does implementing Edge AI present?

Implementing Edge AI presents challenges such as resource constraints on devices, the complexity of model development and deployment for diverse hardware, and efficient data management across distributed systems. Overcoming these requires advanced optimization techniques and robust MLOps practices.

Which industries will Edge AI transform by 2026?

By 2026, industries like autonomous vehicles, smart manufacturing, healthcare, and smart cities will experience significant transformations from Edge AI. These sectors benefit immensely from real-time decision-making, enhanced privacy, and reduced reliance on constant cloud connectivity, driving efficiency and innovation.

Conclusion

The trajectory of Edge AI for Machine Learning is set to redefine technological interactions by 2026, promising a future where devices operate with greater autonomy and speed. The projected 30% speedup in real-time inference is an ambitious goal, but one that continued progress in model optimization, specialized hardware, and robust deployment strategies puts within reach. This shift heralds a new era of intelligent systems that prioritize efficiency, privacy, and reliability, changing how we interact with the digital and physical worlds. Ongoing advancements and strategic implementations will solidify Edge AI as a cornerstone of the next generation of artificial intelligence, empowering devices to make smarter, faster decisions right where the data originates.