Ethical AI Audits: 5 Steps for US Organizations to Ensure Fairness by 2025

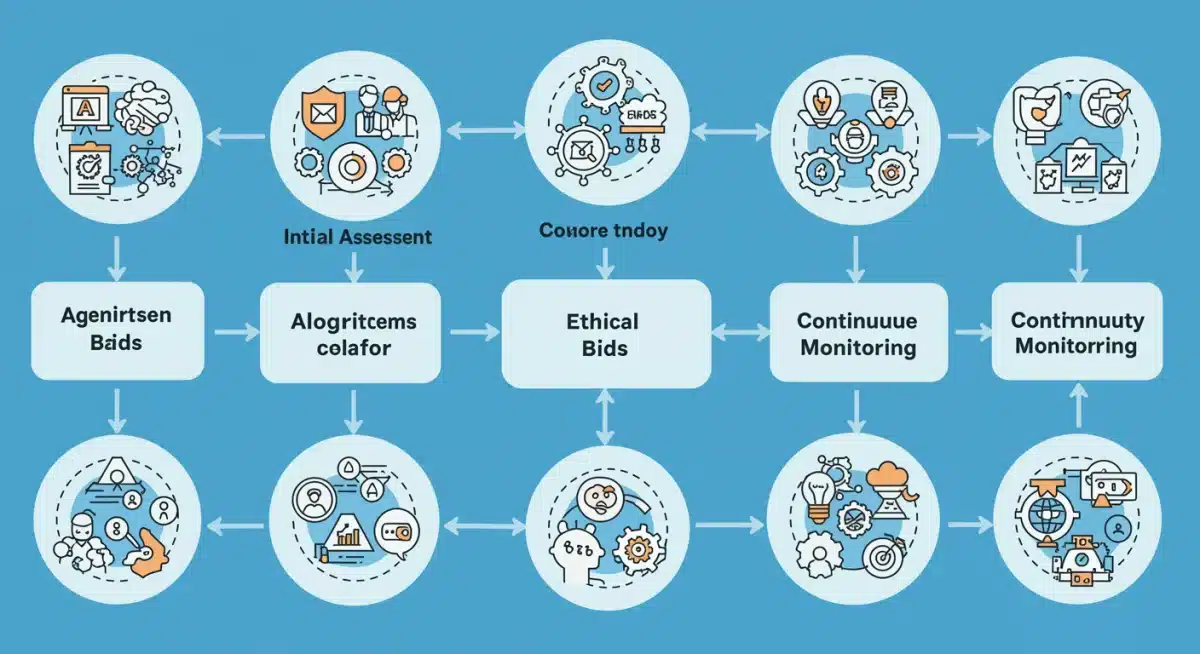

This guide provides a comprehensive framework for US organizations to conduct ethical AI audits, detailing five essential steps to ensure fairness, transparency, and accountability in AI systems by January 2025.

The rapid evolution of artificial intelligence demands a proactive approach to ensure its ethical deployment. For US organizations, understanding and implementing ethical AI audits isn’t just good practice; it’s becoming a regulatory imperative. By January 2025, robust frameworks will be crucial for maintaining public trust and operational integrity.

Understanding the imperative for ethical AI audits

Artificial intelligence is transforming industries at an unprecedented pace, offering immense potential for innovation and efficiency. However, this rapid advancement also brings significant ethical challenges, particularly concerning fairness, accountability, and transparency. In the United States, regulators and consumers are increasingly scrutinizing how AI systems are developed and deployed, making ethical AI audits a critical component of responsible AI governance.

The urgency for these audits stems from several factors, including the potential for algorithmic bias, data privacy concerns, and the need to comply with emerging regulations. Organizations that fail to address these issues risk not only reputational damage but also significant legal and financial penalties. A well-executed ethical AI audit can identify vulnerabilities, mitigate risks, and build trust with stakeholders, ensuring that AI systems serve humanity positively.

The rising tide of AI regulation

The regulatory landscape for AI in the US is swiftly evolving. The US does not yet have a single, comprehensive federal AI law; instead, various agencies are issuing guidance and standards, and states are introducing their own legislation, creating a complex web of compliance requirements. Organizations must track these developments to avoid non-compliance. These regulations often focus on:

- Data privacy: Protecting sensitive personal information used in AI models.

- Algorithmic bias: Ensuring AI systems do not perpetuate or amplify societal biases.

- Transparency: Making AI decision-making processes understandable and explainable.

- Accountability: Establishing clear lines of responsibility for AI system outcomes.

Understanding these regulatory pressures is the first step toward building an effective ethical AI audit strategy. It underscores why organizations need to move beyond mere technical performance metrics to encompass broader societal impacts, aligning their AI initiatives with prevailing ethical standards and legal obligations.

Step 1: establish a robust ethical AI governance framework

Before diving into the technicalities of an audit, US organizations must first lay a strong foundation with a comprehensive ethical AI governance framework. This framework acts as the guiding principle for all AI initiatives, ensuring that ethical considerations are embedded from conception to deployment and beyond. It’s about establishing clear policies, roles, and responsibilities that champion fairness and transparency.

A well-defined governance framework provides the structure necessary to manage ethical risks effectively. It ensures that every stakeholder, from data scientists to executive leadership, understands their role in upholding ethical AI principles. Without this foundational layer, any audit conducted will likely be a reactive measure rather than a proactive safeguard against potential harms.

Key components of an ethical AI governance framework

Building this framework involves several critical elements that work in concert to create a culture of responsible AI development. These components are not static; they require continuous review and adaptation as AI technology evolves and new ethical challenges emerge.

- Ethical principles and values: Define core ethical principles, such as fairness, transparency, accountability, and privacy, that align with the organization’s mission and societal expectations.

- Policy development: Create clear policies for data collection, model development, deployment, and monitoring that reflect these ethical principles.

- Roles and responsibilities: Assign specific roles, such as an AI ethics committee or a dedicated ethics officer, to oversee the implementation and enforcement of ethical guidelines.

- Training and education: Implement mandatory training programs for all personnel involved in AI development and deployment to foster a deep understanding of ethical AI practices.

Establishing this framework ensures that ethical considerations are not an afterthought but an integral part of the AI lifecycle. It prepares the organization for the subsequent audit steps by providing a clear benchmark against which AI systems can be evaluated, fostering a proactive approach to responsible AI. The goal is to embed ethical thinking into the very fabric of AI development and operation.

Step 2: identify and assess AI systems for ethical risk

Once a governance framework is in place, the next crucial step in conducting ethical AI audits is to systematically identify and assess all AI systems within the organization for potential ethical risks. Not all AI systems carry the same level of risk; some may have minimal societal impact, while others, particularly those involved in critical decision-making processes like hiring, lending, or healthcare, can significantly affect individuals’ lives. A thorough risk assessment allows organizations to prioritize their audit efforts and allocate resources effectively.

This step requires a comprehensive inventory of all AI applications, including those developed in-house, acquired from third-party vendors, or embedded within larger software solutions. For each identified system, a detailed evaluation of its purpose, data sources, algorithmic design, and deployment context is essential. Understanding these elements helps in pinpointing areas where ethical concerns might arise, such as bias, lack of transparency, or privacy infringements.

Categorizing AI systems by risk level

To streamline the assessment process, organizations should categorize AI systems based on their potential ethical impact. This categorization helps in determining the depth and frequency of audits required for each system. A common approach involves classifying systems into high, medium, and low-risk categories.

- High-risk systems: These are AI applications that significantly impact individuals’ fundamental rights, safety, or well-being. Examples include AI used in employment decisions, credit scoring, criminal justice, or medical diagnoses.

- Medium-risk systems: AI applications with a moderate potential for harm, such as personalized marketing algorithms or customer service chatbots that handle sensitive information.

- Low-risk systems: AI systems with minimal or negligible ethical impact, often those used for internal operational efficiencies without direct public interaction or critical decision-making.
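The three tiers above can be sketched as a simple rule-based classifier. This is a minimal illustration, not a standard taxonomy: the `AISystem` fields, the `HIGH_RISK_DOMAINS` set, and the rules in `classify` are all hypothetical simplifications of the categories described in the list.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Hypothetical domain labels, taken from the high-risk examples in the text.
HIGH_RISK_DOMAINS = {
    "employment",
    "credit_scoring",
    "criminal_justice",
    "medical_diagnosis",
}


@dataclass
class AISystem:
    name: str
    domain: str                   # e.g. "employment", "customer_service"
    handles_sensitive_data: bool
    public_facing: bool


def classify(system: AISystem) -> RiskTier:
    """Assign a tier using simplified rules that mirror the three categories."""
    if system.domain in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if system.handles_sensitive_data or system.public_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Illustrative inventory entries (invented for this example).
inventory = [
    AISystem("resume-screener", "employment", True, False),
    AISystem("support-chatbot", "customer_service", True, True),
    AISystem("log-anomaly-detector", "internal_ops", False, False),
]
tiers = {s.name: classify(s) for s in inventory}
```

In practice the rules would come from the organization's governance framework, and borderline systems would likely need human review rather than an automatic label.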

The assessment should also consider the data used by these systems. Biased or unrepresentative training data is a primary source of algorithmic unfairness. Therefore, evaluating data provenance, quality, and representativeness is paramount. By systematically identifying and categorizing AI systems, organizations can create a strategic roadmap for their ethical AI audit efforts, ensuring that the most critical systems receive the necessary scrutiny to uphold fairness and accountability.
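One way to make the representativeness check concrete is to compare each group's share of the training data with its share of a reference population. The sketch below assumes records are plain dictionaries and that reference shares are available from some trusted source; the function name, the toy data, and the 10-point flagging threshold are illustrative assumptions.

```python
from collections import Counter


def representation_gaps(records, attribute, reference_shares):
    """Return (share in data - reference share) for each reference group."""
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no records to analyze")
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in reference_shares.items()
    }


# Toy training set: 8 records from group "a", 2 from group "b".
training_records = [{"group": "a"}] * 8 + [{"group": "b"}] * 2

# Hypothetical reference shares (for example, from applicant or census data).
reference = {"a": 0.5, "b": 0.5}

gaps = representation_gaps(training_records, "group", reference)

# Flag groups under-represented by more than 10 percentage points
# (an arbitrary threshold chosen for illustration).
flagged = [g for g, gap in gaps.items() if gap < -0.10]
```

A negative gap means the group appears less often in the data than in the reference population; the choice of reference population is itself a judgment that should be documented.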

Step 3: conduct technical and process audits for bias and transparency

With AI systems identified and categorized, the next critical phase in ethical AI audits involves conducting detailed technical and process audits. This step moves beyond theoretical frameworks to practical examination, scrutinizing both the underlying algorithms and the operational processes that govern their development and deployment. The primary goals are to detect and mitigate algorithmic bias and to enhance the transparency and explainability of AI decision-making.

Technical audits involve a deep dive into the AI model itself. This includes analyzing the training data for representativeness and potential biases, evaluating the algorithm’s performance across different demographic groups, and assessing its robustness against adversarial attacks. Process audits, on the other hand, examine the human-in-the-loop aspects, including data collection methodologies, model development practices, validation procedures, and post-deployment monitoring protocols. Both are essential for a holistic understanding of an AI system’s ethical posture.

Techniques for detecting and mitigating bias

Identifying bias requires a combination of quantitative and qualitative methods. It’s not always straightforward, as bias can manifest in subtle ways, from skewed data distributions to unintended correlations the model learns. Effective mitigation strategies often involve a multi-faceted approach.

- Data bias analysis: Use statistical methods to identify underrepresentation or overrepresentation of certain groups in training data, and check whether historical outcome labels differ across groups, for example through disparate impact analysis.

- Algorithmic fairness metrics: Apply fairness metrics (e.g., demographic parity, equal opportunity, equalized odds, predictive parity) to assess whether the model's outcomes and error rates are comparable across groups defined by sensitive attributes. Open-source toolkits such as Fairlearn or AI Fairness 360 can help compute and visualize these metrics.

- Explainable AI (XAI) tools: Employ XAI techniques such as LIME (Local Interpretable Model-agnostic Explanations) or SHAP (SHapley Additive exPlanations) to understand how models arrive at their decisions, helping to uncover hidden biases or illogical reasoning.

- Bias mitigation techniques: Implement strategies during model training (e.g., re-weighting, adversarial debiasing) or post-processing (e.g., threshold adjustment) to reduce identified biases.
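Several of the metrics named above reduce to simple rate comparisons between groups. The following is a minimal sketch in plain Python, assuming binary predictions and labels (1 = favorable outcome), two non-empty groups, and toy data invented for illustration. The 0.8 cutoff reflects the commonly cited "four-fifths" rule of thumb for disparate impact; it is a screening heuristic, not a legal determination.

```python
def selection_rate(y_pred, groups, group):
    """Share of favorable predictions within one group."""
    preds = [p for p, g in zip(y_pred, groups) if g == group]
    return sum(preds) / len(preds)


def true_positive_rate(y_true, y_pred, groups, group):
    """Share of truly positive cases in one group that were predicted positive."""
    preds = [p for t, p, g in zip(y_true, y_pred, groups) if g == group and t == 1]
    return sum(preds) / len(preds)


def fairness_report(y_true, y_pred, groups, privileged, unprivileged):
    sr_priv = selection_rate(y_pred, groups, privileged)
    sr_unpriv = selection_rate(y_pred, groups, unprivileged)
    tpr_priv = true_positive_rate(y_true, y_pred, groups, privileged)
    tpr_unpriv = true_positive_rate(y_true, y_pred, groups, unprivileged)
    return {
        # Demographic parity: difference in selection rates.
        "demographic_parity_difference": sr_unpriv - sr_priv,
        # Disparate impact: ratio of selection rates.
        "disparate_impact_ratio": sr_unpriv / sr_priv,
        # Equal opportunity: difference in true positive rates.
        "equal_opportunity_difference": tpr_unpriv - tpr_priv,
    }


# Toy data: five individuals in group "A", five in group "B".
groups = ["A"] * 5 + ["B"] * 5
y_true = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 1, 1, 0, 0, 0]

report = fairness_report(y_true, y_pred, groups, privileged="A", unprivileged="B")
needs_review = report["disparate_impact_ratio"] < 0.8
```

Different fairness metrics can conflict with one another, so an audit typically records which metrics were chosen for a given system and why.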

Ensuring transparency involves documenting every stage of the AI lifecycle, from data sourcing to model deployment, making it possible to trace decisions and understand their impact. This step is resource-intensive but indispensable for building trustworthy AI systems that adhere to ethical principles and meet regulatory expectations.

Step 4: implement remediation strategies and continuous monitoring

Discovering ethical vulnerabilities during an audit is only half the battle; the true impact comes from effectively implementing remediation strategies and establishing robust continuous monitoring. This step ensures that identified issues are not only addressed but also prevented from recurring, fostering a dynamic and proactive approach to ethical AI. Remediation involves making necessary adjustments to data, algorithms, or processes, while continuous monitoring provides an ongoing feedback loop to maintain ethical integrity over time.

Remediation efforts should be systematic and well-documented. For instance, if data bias is detected, remediation might involve collecting more representative data, re-weighting existing datasets, or implementing data augmentation techniques. If algorithmic bias is present, adjustments to model architecture, fairness-aware training algorithms, or post-processing techniques may be necessary. These changes must be thoroughly tested and validated to ensure they effectively mitigate the identified risks without introducing new ones.
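As an illustration of re-weighting, the sketch below implements one common scheme, often called reweighing: each training example receives the weight P(group) × P(label) / P(group, label), so that group membership and label become statistically independent in the weighted data. The function name and toy data are assumptions for this example; a real pipeline would pass the weights to a model that accepts per-sample weights and then re-validate the fairness metrics.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights that decorrelate group membership from the label."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    pos = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    tot = sum(w for g, w in zip(groups, weights) if g == group)
    return pos / tot


# Toy data: group "a" has 4 of 6 positive labels, group "b" has 1 of 4.
groups = ["a"] * 6 + ["b"] * 4
labels = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
```

After weighting, both groups have the same weighted positive rate in this toy example, which is the property the scheme is designed to produce. Whether that translates into a fairer model still has to be tested after retraining.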

Establishing a framework for ongoing ethical oversight

Continuous monitoring is paramount because AI systems are not static; they evolve as they interact with new data and environments. A system that is fair and transparent today might not be tomorrow, given societal shifts and data drift. Therefore, organizations need to embed mechanisms for ongoing ethical oversight.

- Automated bias detection: Deploy automated tools that continuously monitor AI system outputs for deviations in fairness metrics or unexpected performance disparities across demographic groups.

- Performance drift alerts: Set up alerts for changes in model performance or data distributions that could indicate emerging biases or ethical issues.

- Regular re-audits: Schedule periodic full ethical AI audits, especially for high-risk systems, to re-evaluate their compliance with ethical principles and regulatory requirements.

- Feedback mechanisms: Create channels for users, affected individuals, and internal stakeholders to report concerns or observed unfairness in AI system behavior.
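The automated detection and alerting ideas above can be sketched as a small rolling-window monitor. Everything here is a hypothetical design for illustration: the `FairnessMonitor` class, its parameters, and the 0.8 alert threshold are assumptions, and a production setup would more likely feed such a check into existing monitoring and alerting infrastructure.

```python
from collections import deque


class FairnessMonitor:
    """Tracks recent decisions and flags a drop in the selection-rate ratio."""

    def __init__(self, group_a, group_b, window=1000, min_ratio=0.8, min_samples=30):
        self.group_a = group_a
        self.group_b = group_b
        self.min_ratio = min_ratio
        self.min_samples = min_samples
        # Only the most recent `window` decisions are kept.
        self.decisions = deque(maxlen=window)

    def record(self, group, decision):
        """Store one decision (1 = favorable, 0 = unfavorable)."""
        self.decisions.append((group, decision))

    def _rate(self, group):
        outcomes = [d for g, d in self.decisions if g == group]
        if len(outcomes) < self.min_samples:
            return None  # too little data for a meaningful rate
        return sum(outcomes) / len(outcomes)

    def check(self):
        """Return the current ratio and alert flag, or None if undetermined."""
        rate_a = self._rate(self.group_a)
        rate_b = self._rate(self.group_b)
        if rate_a is None or rate_b is None or max(rate_a, rate_b) == 0:
            return None
        ratio = min(rate_a, rate_b) / max(rate_a, rate_b)
        return {"ratio": ratio, "alert": ratio < self.min_ratio}


# Toy usage: group "A" is approved 8 of 10 times, group "B" 4 of 10 times.
monitor = FairnessMonitor("A", "B", window=100, min_samples=5)
for decision in [1] * 8 + [0] * 2:
    monitor.record("A", decision)
for decision in [1] * 4 + [0] * 6:
    monitor.record("B", decision)

status = monitor.check()
```

The minimum-sample guard matters: with very few decisions per group, the ratio swings widely and would generate noisy alerts.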

By integrating remediation with continuous monitoring, US organizations can ensure that their AI systems remain ethically sound and compliant. This iterative process of detection, correction, and ongoing vigilance is fundamental to building and sustaining trust in AI technologies. It reinforces the commitment to responsible innovation and safeguards against potential harm throughout the AI lifecycle.

Step 5: documentation, reporting, and stakeholder communication

The final, yet equally critical, step in the ethical AI audit process for US organizations is comprehensive documentation, transparent reporting, and effective stakeholder communication. This stage ensures accountability, builds trust, and demonstrates compliance with internal policies and external regulations. Without clear records and open communication, even the most thorough audit and effective remediation efforts can fall short of their intended impact.

Documentation should cover every aspect of the audit: the scope, methodologies used, findings, remediation actions taken, and the results of continuous monitoring. This detailed record serves as an invaluable resource for internal review, regulatory compliance, and future audit planning. It also provides a clear narrative of the organization’s commitment to ethical AI practices, offering defensibility in the event of scrutiny.
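A structured record makes the elements listed above easier to review and compare across audits. The sketch below is one possible shape, not a regulatory format: the `AuditRecord` and `Finding` classes, their field names, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Finding:
    description: str
    severity: str          # e.g. "high", "medium", "low"
    remediation: str
    resolved: bool = False


@dataclass
class AuditRecord:
    system_name: str
    audit_date: str        # ISO date string, e.g. "2024-11-01"
    scope: str
    methodologies: list
    findings: list = field(default_factory=list)
    monitoring_results: dict = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize the full record, including nested findings."""
        return json.dumps(asdict(self), indent=2)


# Illustrative record with invented values.
record = AuditRecord(
    system_name="resume-screener",
    audit_date="2024-11-01",
    scope="Selection-rate fairness across applicant groups",
    methodologies=["disparate impact analysis", "SHAP feature review"],
    findings=[
        Finding(
            description="Selection-rate ratio below 0.8 for one group",
            severity="high",
            remediation="Re-weight training data and re-validate",
        )
    ],
    monitoring_results={"disparate_impact_ratio": 0.5},
)
serialized = record.to_json()
```

Storing records in a machine-readable form such as JSON also makes it simpler to generate the internal and regulatory reports described later in this step.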

Communicating ethical AI commitments

Transparency in reporting is not just about internal record-keeping; it extends to communicating findings and commitments to a broader audience. This includes regulatory bodies, customers, employees, and the general public. Effective communication can significantly enhance an organization’s reputation and foster greater trust in its AI initiatives.

- Internal reports: Generate detailed reports for executive leadership and relevant departments, highlighting key findings, risks, and recommended actions.

- Regulatory compliance reports: Prepare specific reports tailored to meet the requirements of relevant US regulatory bodies, demonstrating adherence to emerging AI ethics guidelines and laws.

- Public transparency statements: Consider issuing public statements or publishing summaries of ethical AI audit findings, particularly for high-impact AI systems, to showcase commitment to fairness and accountability.

- Stakeholder engagement: Hold regular discussions with key stakeholders, including advocacy groups and AI ethics experts, to gather feedback and continuously refine ethical AI practices.

By diligently documenting their ethical AI audit processes and openly communicating their efforts, US organizations can solidify their standing as responsible innovators. This final step transforms the audit from a mere technical exercise into a powerful tool for governance, risk management, and reputation building, ensuring that AI serves society ethically and equitably by January 2025 and beyond.

| Key Step | Brief Description |
|---|---|
| Establish Governance | Define ethical principles, policies, and roles for responsible AI development. |
| Assess AI Systems | Identify and categorize AI applications by ethical risk level and potential impact. |
| Audit for Bias & Transparency | Conduct technical and process reviews to detect and address algorithmic bias and explainability issues. |
| Remediate & Monitor | Implement solutions for identified issues and establish continuous oversight mechanisms. |
| Document & Communicate | Record audit scope, methods, findings, and remediation, and report them transparently to stakeholders. |

Frequently asked questions about ethical AI audits

Why are ethical AI audits crucial for US organizations?

Ethical AI audits are crucial due to increasing regulatory scrutiny, public demand for fairness, and the potential for significant legal and reputational risks associated with biased or non-transparent AI systems. They ensure compliance and build trust in AI deployments.

What is algorithmic bias, and how do audits address it?

Algorithmic bias occurs when AI systems produce unfair or discriminatory outcomes. Audits address this by analyzing training data for representativeness, applying fairness metrics, and using explainable AI tools to identify and mitigate biases during development and deployment.

What role does AI governance play in ethical audits?

AI governance provides the foundational framework for ethical audits. It establishes the principles, policies, roles, and responsibilities that guide AI development and deployment, ensuring ethical considerations are integrated from the outset and making audits more effective.

How often should ethical AI audits be conducted?

The frequency depends on the AI system’s risk level and how quickly it changes. High-risk systems with significant societal impact should undergo regular, perhaps annual, full audits, complemented by continuous monitoring, while lower-risk systems may require less frequent checks.

Why is transparent reporting important?

Transparent reporting builds trust with stakeholders, demonstrates an organization’s commitment to ethical AI, and aids in regulatory compliance. It provides clear documentation of audit processes, findings, and remediation efforts, enhancing accountability and reputation.

Conclusion

As US organizations navigate the complex landscape of artificial intelligence, embracing ethical AI audits is no longer optional but a strategic imperative. By following these five comprehensive steps – establishing robust governance, identifying and assessing risks, conducting thorough technical and process audits, implementing remediation and continuous monitoring, and ensuring transparent documentation and communication – organizations can proactively safeguard against ethical pitfalls. This systematic approach not only ensures fairness and compliance by January 2025 but also fosters a culture of responsible innovation, building lasting trust with customers, regulators, and the broader society. The future of AI hinges on our collective commitment to ethical deployment, making these audits a cornerstone of sustainable technological advancement.