Mitigating AI Risk: A 5-Step Ethical Framework for U.S. Organizations in 2026

The rapid advancement of Artificial Intelligence (AI) presents unprecedented opportunities for innovation, efficiency, and growth across virtually every sector. However, this transformative power comes with a significant caveat: the potential for substantial risks. As U.S. organizations increasingly integrate AI into their operations, the imperative to manage these risks ethically and responsibly has never been more critical. The year 2026 looms as a crucial horizon, with evolving regulatory landscapes and heightened public scrutiny demanding proactive measures. This article introduces a comprehensive AI Ethical Framework designed specifically for U.S. organizations to navigate this complex terrain, ensuring not only compliance but also the cultivation of trust and sustainable AI practices.

The ethical implications of AI are vast, spanning issues from algorithmic bias and data privacy to job displacement and autonomous decision-making. Without a robust AI Ethical Framework, organizations risk legal repercussions, reputational damage, and a loss of consumer confidence. Furthermore, the U.S. government is actively shaping the future of AI governance through agencies such as the National Institute of Standards and Technology (NIST) and initiatives such as its AI Risk Management Framework. Adopting a proactive stance on AI ethics is no longer a luxury but a strategic necessity for U.S. organizations aiming to thrive in the AI-driven future.

Our 5-step AI Ethical Framework provides a structured approach to identifying, assessing, mitigating, and monitoring AI risks. It is designed to be adaptable, scalable, and integrated into existing organizational processes, fostering a culture of responsible AI development and deployment. By embracing this framework, U.S. organizations can not only mitigate potential harms but also unlock the full, positive potential of AI, ensuring its benefits are realized equitably and transparently.

The Urgency of an AI Ethical Framework for U.S. Organizations

The landscape for AI in the United States is characterized by both rapid technological advancement and a burgeoning regulatory environment. While the U.S. has historically favored innovation-driven approaches, there’s a growing consensus on the need for responsible AI governance. Several factors underscore the urgency for U.S. organizations to implement a robust AI Ethical Framework:

Evolving Regulatory Landscape

While the U.S. does not yet have a single, overarching federal AI law, various agencies are issuing guidance and frameworks. NIST’s AI Risk Management Framework (AI RMF) provides a voluntary but influential guide for managing AI risks. State-level initiatives, coupled with sector-specific regulations (e.g., in healthcare and finance), create a complex compliance mosaic. Organizations failing to anticipate and adapt to these evolving rules risk non-compliance, hefty fines, and operational disruptions. A well-defined AI Ethical Framework serves as an internal compass, guiding organizations toward practices that are likely to align with future regulations.

Public Trust and Reputation

Public awareness and concern about AI’s ethical implications are rising. Incidents of biased algorithms, privacy breaches, or autonomous systems making questionable decisions can quickly erode public trust. For U.S. organizations, maintaining a positive reputation and building consumer confidence are paramount. An ethical approach to AI demonstrates a commitment to societal well-being, enhancing brand value and fostering long-term customer loyalty. Conversely, a failure in AI ethics can lead to significant reputational damage that is difficult and costly to repair.

Competitive Advantage and Innovation

Paradoxically, an AI Ethical Framework can be a source of competitive advantage. Organizations known for their responsible AI practices are more likely to attract top talent, secure partnerships, and gain broader acceptance for their AI-powered products and services. Ethical considerations can also spur innovation, leading to the development of more robust, fair, and transparent AI systems that offer superior value and address a wider range of user needs. In a crowded market, ethical differentiation can be a powerful tool.

Stakeholder Demands

Beyond customers and regulators, employees, investors, and civil society organizations are increasingly demanding ethical AI practices. Employees want to work for companies that align with their values. Investors are incorporating ESG (Environmental, Social, and Governance) factors into their decision-making, with AI ethics falling squarely under the ‘S’ and ‘G’ components. Ignoring these stakeholder demands can lead to internal dissent, investor withdrawal, and activist campaigns, all of which can negatively impact an organization’s stability and growth.

The 5-Step AI Ethical Framework for U.S. Organizations

This framework is designed to be cyclical and iterative, emphasizing continuous improvement and adaptation. Each step builds upon the last, fostering a holistic approach to responsible AI. Implementing this AI Ethical Framework requires commitment from leadership and integration across all relevant departments.

Step 1: Establish Ethical AI Governance and Leadership

The foundation of any effective AI Ethical Framework is strong governance. This step involves creating the organizational structures, policies, and leadership commitment necessary to guide ethical AI development and deployment. Without clear leadership and accountability, ethical intentions can easily falter.

Key Actions:

- Form an AI Ethics Committee/Council: This cross-functional body should include representatives from legal, IT, data science, product development, HR, and ethics. Its mandate is to establish ethical guidelines, review AI projects, and provide oversight.

- Develop an AI Code of Conduct/Principles: Articulate clear, actionable ethical principles (e.g., fairness, transparency, accountability, privacy, safety) that align with organizational values and anticipated regulatory requirements. These principles should serve as the bedrock of your AI Ethical Framework.

- Assign Roles and Responsibilities: Clearly define who is responsible for AI ethics at different stages of the AI lifecycle, from data collection to model deployment and monitoring. This includes establishing a Chief AI Ethics Officer or similar role if appropriate for the organization’s scale.

- Integrate Ethics into AI Strategy: Ensure ethical considerations are embedded from the initial conceptualization of AI projects, not as an afterthought. This means ethical implications are part of the business case and design phases.

- Secure Executive Buy-in: Leadership must champion the AI Ethical Framework, allocate necessary resources, and demonstrate a visible commitment to ethical AI practices.

Establishing robust governance ensures that ethical considerations are systematically addressed and that there is a clear pathway for decision-making and accountability. This is the bedrock upon which a resilient AI Ethical Framework is built, preparing U.S. organizations for the challenges of 2026 and beyond.

Step 2: Conduct Comprehensive AI Risk Assessment and Impact Analysis

Once governance is in place, the next critical step for any AI Ethical Framework is to systematically identify and understand the potential risks and impacts of AI systems. This goes beyond technical vulnerabilities to encompass societal, ethical, and human rights considerations.

Key Actions:

- Identify AI Use Cases and Contexts: Catalog all current and planned AI applications within the organization. Understand the domain, data sources, decision-making processes, and potential beneficiaries and affected parties.

- Perform Ethical Impact Assessments (EIAs): For each AI system, conduct a thorough assessment to identify potential biases, privacy infringements, fairness issues, safety concerns, and broader societal impacts. This should be an integral part of your AI Ethical Framework.

- Quantify and Qualify Risks: Evaluate the likelihood and severity of identified risks. This includes assessing the potential for discrimination, loss of autonomy, misinformation, or unfair outcomes. Utilize frameworks like NIST AI RMF to categorize and prioritize risks.

- Data Audits and Provenance: Scrutinize data sources for bias, representativeness, and privacy implications. Understand how data is collected, processed, and used by AI models, ensuring compliance with data protection regulations (e.g., CCPA and other state privacy laws).

- Scenario Planning: Develop hypothetical scenarios to stress-test AI systems for unintended consequences, ethical dilemmas, and potential misuse. This proactive approach helps uncover risks that might not be immediately apparent.

This step ensures that U.S. organizations have a clear-eyed view of the potential downsides of their AI initiatives, allowing for informed decision-making and targeted mitigation strategies. A thorough risk assessment is a cornerstone of a responsible AI Ethical Framework.
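To make the "Quantify and Qualify Risks" action more concrete, one possible starting point is a small risk register that scores each risk as likelihood times severity and sorts the results. The sketch below is illustrative only: the `AIRisk` class, the 1-to-5 scales, the tier cut-offs, and the example systems are all assumptions made for this example and are not taken from the NIST AI RMF or any other standard.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    """One entry in a hypothetical AI risk register."""
    system: str
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain); illustrative scale
    severity: int    # 1 (negligible) to 5 (critical); illustrative scale

    @property
    def score(self) -> int:
        # Simple likelihood x severity product; other schemes are possible.
        return self.likelihood * self.severity

    @property
    def tier(self) -> str:
        # Illustrative cut-offs, not drawn from any published standard.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def prioritize(risks: list[AIRisk]) -> list[AIRisk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Made-up example entries.
register = [
    AIRisk("support-chatbot", "misleading answers to customers", 3, 3),
    AIRisk("resume-screener", "disparate impact on protected groups", 4, 5),
    AIRisk("demand-forecast", "stale training data", 2, 2),
]

for risk in prioritize(register):
    print(f"{risk.tier:<6} {risk.score:>2}  {risk.system}: {risk.description}")
```

A multiplicative score is easy to explain to non-technical reviewers, but it treats a rare catastrophic risk the same as a frequent minor one, so an ethics committee may want to review high-severity items regardless of their score.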

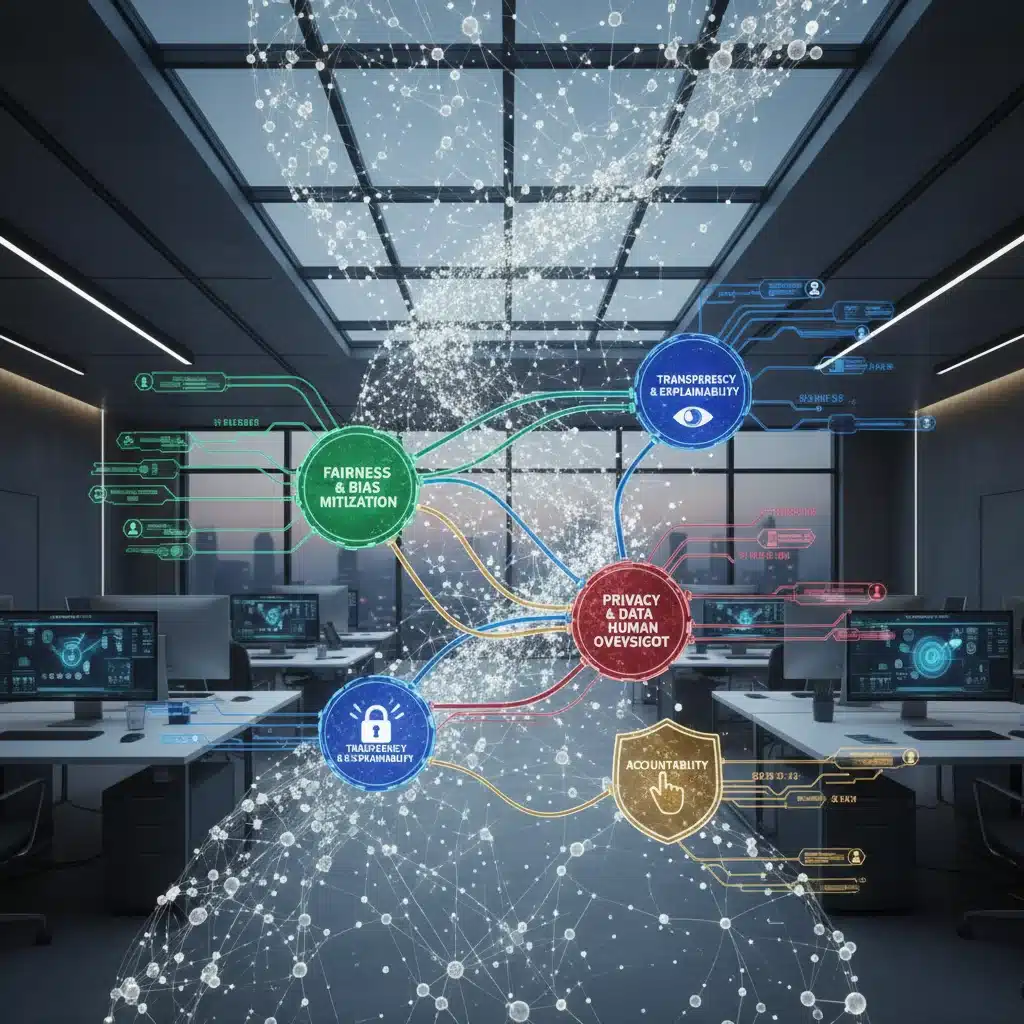

Step 3: Implement Ethical AI Design and Development Principles

With risks identified, the focus shifts to embedding ethical considerations directly into the design and development lifecycle of AI systems. This proactive approach is far more effective than trying to bolt on ethics as an afterthought. This step is where the theoretical principles of the AI Ethical Framework translate into practical engineering.

Key Actions:

- Privacy-by-Design and Security-by-Design: Incorporate privacy-enhancing technologies and robust security measures from the outset. This includes data minimization, anonymization techniques, and secure data storage and processing.

- Fairness and Bias Mitigation: Implement strategies to detect, measure, and mitigate algorithmic bias. This involves diverse training data, fairness metrics, and bias detection tools. Regularly audit models for disparate impact on protected groups.

- Transparency and Explainability (XAI): Design AI systems to be as transparent and explainable as possible. Provide clear documentation on how models work, their limitations, and the rationale behind their decisions, especially in high-stakes applications. This enhances the efficacy of the AI Ethical Framework.

- Human Oversight and Control: Ensure that human decision-makers retain ultimate control and oversight, especially in critical applications. Design human-in-the-loop mechanisms for review, intervention, and override where necessary.

- Robustness and Reliability: Develop AI systems that are resilient to adversarial attacks, errors, and unexpected inputs. Prioritize safety and reliability, particularly for AI applications in critical infrastructure or public safety.
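As a rough illustration of the "Fairness and Bias Mitigation" action, auditing for disparate impact can begin with comparing the rate of favorable outcomes across groups. The sketch below computes per-group selection rates and the ratio of the lowest rate to the highest. The function names, the synthetic data, and the 0.8 threshold (a heuristic often called the four-fifths rule) are assumptions chosen for this example; a ratio below the threshold is a prompt for closer review, not a legal determination.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the share of favorable outcomes per group.

    `outcomes` is an iterable of (group, selected) pairs, where `selected`
    is True when the model produced the favorable decision.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, selected in outcomes:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome")
    return min(rates.values()) / highest


# Synthetic decisions, made up for illustration only.
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 42 + [("group_b", False)] * 58
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
THRESHOLD = 0.8  # four-fifths heuristic; an assumption, not a legal test
flagged = ratio < THRESHOLD
print(rates, round(ratio, 2), "review needed" if flagged else "within threshold")
```

A single ratio is only one of several fairness metrics, and different metrics can disagree, so the choice of metric is itself a decision worth documenting.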

By integrating these principles into the technical development process, U.S. organizations can build AI systems that are not only effective but also inherently more ethical and trustworthy, aligning with the core tenets of a strong AI Ethical Framework.
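The human-in-the-loop idea described under "Human Oversight and Control" can be sketched as a routing rule that decides whether a model decision is applied automatically or queued for a person. Everything here is hypothetical: the `Decision` fields, the `route` function, and the 0.9 confidence cut-off are assumptions for illustration, and a real system would set its own criteria per use case.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A model output awaiting action (hypothetical structure)."""
    subject_id: str
    outcome: str        # e.g. "approve" or "deny"
    confidence: float   # model confidence in [0, 1]
    high_stakes: bool   # set by the use-case owner, not by the model


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Return "auto" to apply the decision or "human_review" to queue it.

    The rule is deliberately conservative: anything high-stakes, adverse,
    or low-confidence goes to a person who can confirm or override it.
    """
    if decision.high_stakes or decision.outcome == "deny":
        return "human_review"
    if decision.confidence < min_confidence:
        return "human_review"
    return "auto"


# Made-up examples.
print(route(Decision("c-101", "approve", 0.97, high_stakes=False)))
print(route(Decision("c-102", "deny", 0.99, high_stakes=False)))
print(route(Decision("c-103", "approve", 0.62, high_stakes=False)))
```

Sending every adverse outcome to review, regardless of model confidence, reflects the point that a confident model can still be wrong in ways that matter most to the affected person.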

Step 4: Foster Transparency, Communication, and Stakeholder Engagement

An ethical AI strategy cannot exist in a vacuum. Transparent communication and active engagement with stakeholders are crucial for building trust, gathering diverse perspectives, and ensuring accountability. This step emphasizes the outward-facing aspects of the AI Ethical Framework.

Key Actions:

- Communicate AI Principles and Policies: Clearly articulate the organization’s AI ethical principles and how they are being implemented to employees, customers, and the public. This can be done through public statements, reports, and dedicated website sections.

- Provide Explainability to Users: When AI systems make decisions that affect individuals, provide clear, understandable explanations for those decisions. This empowers users and allows for recourse if errors occur.

- Establish Feedback Mechanisms: Create channels for users, employees, and the public to provide feedback, report concerns, or challenge AI-driven outcomes. This demonstrates responsiveness and commitment to continuous improvement within your AI Ethical Framework.

- Engage with External Experts and Civil Society: Collaborate with ethicists, academics, and advocacy groups to gain external perspectives, validate ethical approaches, and stay abreast of emerging concerns. This broadens the scope and effectiveness of your AI Ethical Framework.

- Educate and Train Employees: Implement comprehensive training programs for all employees involved in AI development, deployment, or decision-making. This ensures a shared understanding of ethical responsibilities and best practices.

Open dialogue and transparent practices are essential for democratizing AI and ensuring that its development serves broader societal interests. This step reinforces the idea that an AI Ethical Framework is a shared responsibility.

Step 5: Implement Continuous Monitoring, Auditing, and Improvement

The ethical landscape of AI is dynamic, with new challenges and solutions constantly emerging. Therefore, an effective AI Ethical Framework must be iterative, incorporating continuous monitoring, regular auditing, and a commitment to ongoing improvement. This final step closes the loop, ensuring long-term ethical integrity.

Key Actions:

- Develop AI Performance and Ethics Dashboards: Create metrics and dashboards to continuously monitor AI system performance, fairness metrics, bias detection, and adherence to ethical guidelines.

- Regular Ethical Audits: Conduct periodic internal and external audits of AI systems to verify compliance with ethical principles, policies, and regulatory requirements. These audits should assess both the technical implementation and the societal impact.

- Incident Response and Remediation: Establish clear protocols for responding to ethical breaches, unintended consequences, or system failures. This includes investigation, remediation, and transparent communication.

- Stay Abreast of Research and Regulations: Continuously monitor advancements in AI ethics research, best practices, and evolving U.S. and international regulations. Adapt the AI Ethical Framework as needed to remain current and effective.

- Feedback Loop Integration: Ensure that insights gained from monitoring, audits, and stakeholder feedback are fed back into the design, development, and governance processes (Steps 1-3) for continuous improvement. This makes the AI Ethical Framework a living document.

This continuous cycle of monitoring and improvement ensures that the AI Ethical Framework remains relevant, effective, and responsive to the evolving challenges and opportunities presented by AI. For U.S. organizations, this iterative approach is key to long-term success and ethical leadership in the AI era.
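The dashboard and feedback-loop actions above imply some automated check that compares monitored metrics against agreed limits and escalates breaches. A minimal sketch of such a check follows. The `MetricCheck` class, the metric names, and every threshold value are assumptions invented for this example; actual limits would come from the organization's own policies.

```python
from dataclasses import dataclass


@dataclass
class MetricCheck:
    """A monitored metric with an acceptable range (all values illustrative)."""
    name: str
    value: float
    minimum: float
    maximum: float

    def breached(self) -> bool:
        # True when the observed value falls outside the allowed range.
        return not (self.minimum <= self.value <= self.maximum)


def find_alerts(checks):
    """Return the names of metrics that fall outside their allowed range."""
    return [c.name for c in checks if c.breached()]


# Hypothetical weekly snapshot for one deployed model.
snapshot = [
    MetricCheck("accuracy", 0.91, minimum=0.88, maximum=1.0),
    MetricCheck("disparate_impact_ratio", 0.74, minimum=0.80, maximum=1.25),
    MetricCheck("human_override_rate", 0.03, minimum=0.0, maximum=0.10),
]

alerts = find_alerts(snapshot)
if alerts:
    print("Escalate to the AI ethics committee:", ", ".join(alerts))
else:
    print("All monitored metrics are within range.")
```

Tracking the human override rate alongside accuracy and fairness is one way to notice when reviewers start disagreeing with a model more often, which can be an early sign of drift.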

Challenges and Considerations for U.S. Organizations

Implementing an AI Ethical Framework is not without its challenges. U.S. organizations face unique hurdles that must be addressed for successful adoption:

- Resource Allocation: Establishing and maintaining an ethical AI program requires significant investment in personnel, technology, and training. Organizations must be prepared to allocate these resources effectively.

- Data Governance Complexity: Managing diverse and often sensitive datasets while ensuring privacy, fairness, and compliance with various state and federal regulations (such as HIPAA and CCPA) is a major undertaking.

- Talent Gap: There is a shortage of professionals with expertise in both AI technology and ethics. Developing internal capabilities or partnering with external experts is crucial.

- Navigating Evolving Regulations: The lack of a unified federal AI regulation in the U.S. creates uncertainty. Organizations must maintain flexibility and adaptability in their AI Ethical Framework to respond to new mandates.

- Measuring Ethical Impact: Quantifying and measuring the ethical impact of AI systems can be challenging. Developing robust metrics and evaluation methodologies is an ongoing area of focus.

- Balancing Innovation and Risk: Striking the right balance between fostering rapid innovation and mitigating potential risks is a constant tension. An effective AI Ethical Framework helps organizations make informed trade-offs.

Addressing these challenges requires a strategic, long-term commitment from U.S. organizations and a willingness to adapt their processes and culture. The benefits of a well-implemented AI Ethical Framework, however, far outweigh these hurdles.

The Future of AI Ethics in the U.S. by 2026

By 2026, the landscape of AI ethics in the U.S. is expected to be significantly more defined. We anticipate:

- Increased Regulatory Clarity: While a single federal law might still be elusive, more concrete guidelines and sector-specific regulations are likely to emerge, potentially building on frameworks like NIST’s.

- Standardization of Ethical AI Practices: Best practices for bias detection, explainability, and privacy will become more standardized, driven by industry consensus and regulatory pressure.

- Greater Demand for Ethical AI Professionals: The need for AI ethicists, governance specialists, and responsible AI engineers is expected to grow substantially.

- Consumer Expectation for Ethical AI: Users will increasingly expect transparency and fairness from AI systems, and will favor organizations that demonstrate strong ethical commitments.

- Litigation and Enforcement: We may see an increase in legal challenges related to AI bias, discrimination, and privacy violations, making robust ethical frameworks even more critical for risk mitigation.

U.S. organizations that proactively adopt and integrate a comprehensive AI Ethical Framework now will be best positioned to thrive in this evolving environment, safeguarding their future and contributing to a more responsible AI ecosystem.

Conclusion: Building a Responsible AI Future

The development and deployment of AI represent a pivotal moment for U.S. organizations. While the potential rewards are immense, the ethical responsibilities are equally significant. Implementing a robust AI Ethical Framework is no longer optional but a strategic imperative for organizations aiming to navigate the complexities of AI by 2026 and beyond.

This 5-step framework (governance, risk assessment, ethical design, transparency, and continuous improvement) provides a clear roadmap for U.S. organizations to build and deploy AI systems that are not only innovative and efficient but also fair, transparent, and accountable. By prioritizing ethical considerations from inception to deployment and beyond, organizations can mitigate risks, build stakeholder trust, enhance their reputation, and ultimately unlock the full, positive potential of artificial intelligence.

The proactive adoption of an AI Ethical Framework will differentiate leaders in the AI era, ensuring that technology serves humanity’s best interests while fostering sustainable growth and responsible innovation. The time to act is now, to shape a future where AI is a force for good, guided by strong ethical principles and robust governance.