PyTorch vs TensorFlow 2026: Large-Scale ML in the US

The year 2026 marks a pivotal moment in the evolution of artificial intelligence, particularly in the realm of large-scale machine learning. As data volumes explode and computational power continues its relentless ascent, the choice of the right deep learning framework has become more critical than ever, especially for organizations operating within the dynamic and competitive landscape of the United States. At the heart of this decision lies the perennial debate: PyTorch vs TensorFlow 2026. These two powerhouses have dominated the AI scene for years, each boasting a dedicated community, robust capabilities, and a distinct philosophy. Understanding their nuances is not merely an academic exercise; it’s a strategic imperative for any entity aiming to innovate and scale in the US market.

This comprehensive comparison delves deep into the current state of PyTorch and TensorFlow in 2026, examining their architectural differences, performance benchmarks, community support, ease of use, and suitability for various large-scale machine learning projects. We will explore how each framework has evolved to meet the demands of modern AI, from research and development to deployment and production. Whether you’re a data scientist, an ML engineer, or a decision-maker in a tech enterprise, this article aims to provide clarity and actionable insights to guide your framework selection in the coming years.

The Evolving Landscape of AI Frameworks in 2026

The machine learning ecosystem in 2026 is characterized by rapid advancements, increasing complexity, and an insatiable demand for scalable solutions. Both PyTorch and TensorFlow have undergone significant transformations to remain at the forefront. TensorFlow, backed by Google, has traditionally been the go-to for production-ready deployments, boasting a mature ecosystem and extensive tooling for various stages of the ML lifecycle. PyTorch, created at Meta (formerly Facebook) and governed by the PyTorch Foundation under the Linux Foundation since 2022, has garnered immense popularity in the research community due to its flexibility and Pythonic interface, which make rapid prototyping and experimentation straightforward. The crucial question for many organizations in the US is no longer just about which framework is ‘better,’ but rather which one aligns more closely with their specific project requirements, team expertise, and long-term strategic goals for large-scale machine learning.

The contest between PyTorch and TensorFlow in 2026, better described as co-existence than as a battle for dominance, is not just about features. It encompasses factors like the availability of skilled talent, integration with cloud platforms, support for specialized hardware, and the robustness of their respective deployment pipelines. Large-scale machine learning projects, by definition, involve massive datasets, complex models, and often distributed training. Therefore, the ability of a framework to handle these challenges efficiently and reliably is paramount. Let’s dive into the core characteristics that define each framework in 2026.

PyTorch in 2026: Flexibility and Research Agility for Large-Scale Projects

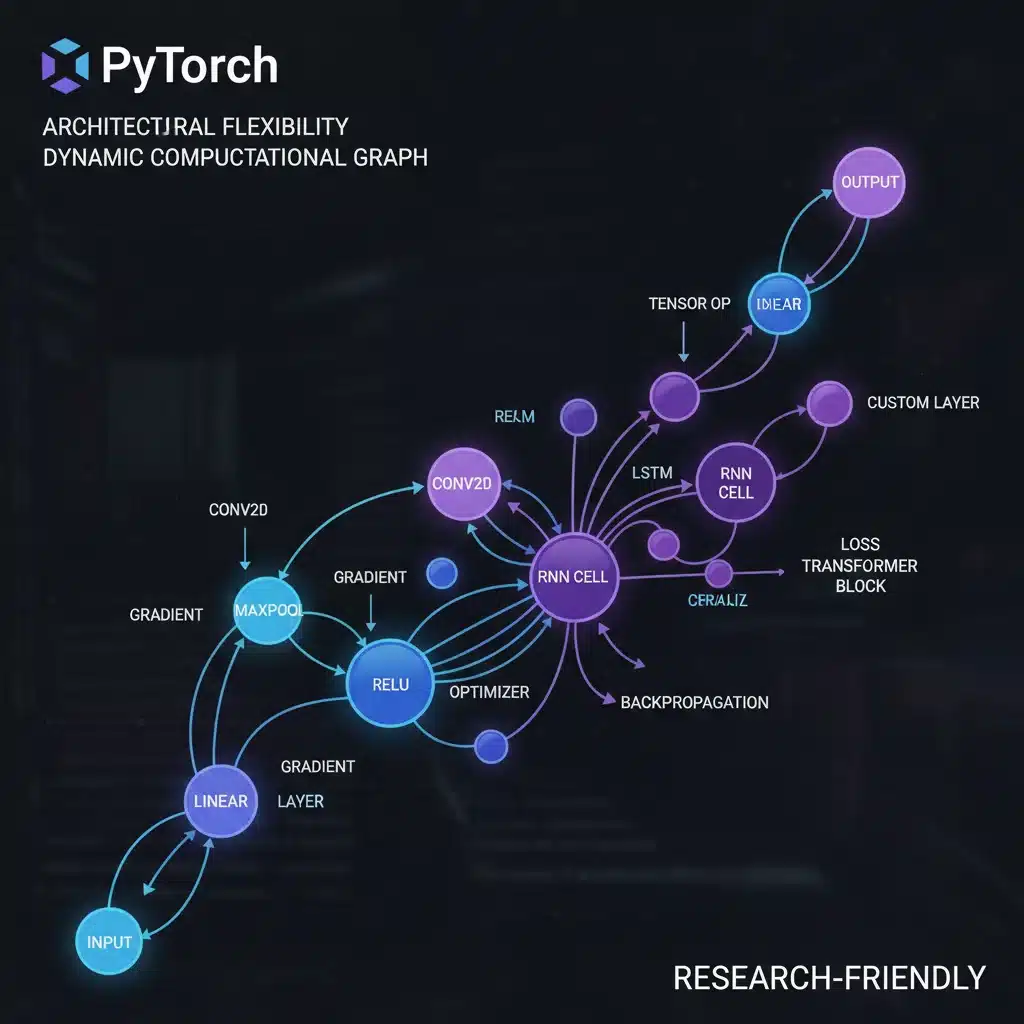

PyTorch, in 2026, has solidified its position as the preferred framework for cutting-edge research and development, even for projects that eventually scale to production. Its defining feature remains its dynamic (define-by-run) computational graph: the graph is built as the Python code executes, which allows greater flexibility and easier debugging than the static graph approach of TensorFlow 1.x (TensorFlow itself has run eagerly by default since version 2.0). This dynamic nature is particularly beneficial for complex models with variable input sizes, recurrent neural networks, and advanced research areas like reinforcement learning and generative AI.
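To make the dynamic-graph idea concrete, here is a minimal, framework-free sketch. It is not PyTorch code and not how PyTorch implements autograd. The `Value` class and the `repeated_square` helper are hypothetical names invented for this illustration. The point it tries to show is that the graph is recorded while ordinary Python control flow runs, so a loop count chosen at call time changes the graph that gets differentiated.

```python
class Value:
    """A scalar that records how it was computed (toy define-by-run node)."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents    # Value objects this one was computed from
        self._grad_fns = grad_fns  # one local-derivative function per parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def backward(self):
        # Topologically order the graph recorded during the forward pass.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        # Walk from the output back to the inputs, applying the chain rule.
        for node in reversed(order):
            for parent, grad_fn in zip(node._parents, node._grad_fns):
                parent.grad += grad_fn(node.grad)


def repeated_square(x, steps):
    """Multiply by x `steps` times; the loop count shapes the recorded graph."""
    out = Value(1.0)
    for _ in range(steps):  # ordinary Python control flow
        out = out * x
    return out


x = Value(3.0)
y = repeated_square(x, steps=2)  # records the graph for x**2 on this call
y.backward()
print(y.data, x.grad)  # 9.0 6.0
```

Calling `repeated_square` with a different `steps` value records a different graph on the next call, with no separate compile step. That is the property the paragraph above attributes to dynamic graphs.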

Key Advantages of PyTorch for Large-Scale ML in the US:

- Dynamic Computational Graph: This remains PyTorch’s crown jewel. It enables imperative programming, making debugging intuitive and model construction highly flexible. For large-scale projects involving intricate architectures or novel research, this flexibility accelerates the development cycle significantly.

- Pythonic Interface: PyTorch feels very natural to Python developers, reducing the learning curve and allowing for more idiomatic code. This ease of use translates to faster prototyping and iteration, crucial for competitive R&D in the US tech sphere.

- Strong Community and Ecosystem Growth: The PyTorch community has grown exponentially, fostering a rich ecosystem of libraries and tools (e.g., PyTorch Lightning, Hugging Face Transformers, TorchServe). This vibrant community support is invaluable for troubleshooting, sharing best practices, and accessing pre-trained models for large-scale applications.

- Distributed Training Advancements: While initially seen as a TensorFlow stronghold, PyTorch has made massive strides in distributed training. Tools like torch.distributed and PyTorch Lightning’s robust distributed training support make it highly capable for scaling models across multiple GPUs and machines, a necessity for large-scale ML in 2026.

- Integration with Cloud AI Platforms: PyTorch’s integration with major cloud providers (AWS, Azure, GCP) has matured significantly. Services like AWS SageMaker, Azure Machine Learning, and Google Cloud’s Vertex AI now offer first-class support for PyTorch, simplifying the deployment and management of large-scale PyTorch models.

- TorchServe for Production Deployment: TorchServe, an open-source tool developed jointly by AWS and Meta, serves PyTorch models in production. It provides features like model versioning, multi-model serving, and A/B testing, addressing a key area where TensorFlow previously held a distinct advantage.
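The distributed training point in the list above rests on one simple idea: in data-parallel training, each worker computes gradients on its own shard of the batch, and the workers then average those gradients before updating the weights. The sketch below illustrates that arithmetic in plain Python. It does not call torch.distributed, it runs in a single process, and the function names and the tiny dataset are hypothetical. It assumes equal-sized shards and a mean-reduced loss, which is what makes the averaged result match the single-device gradient.

```python
def mse_gradient(w, batch):
    """Gradient of mean squared error for the 1-D model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_gradient(w, batch, num_workers):
    """Split the batch into equal shards, compute per-shard gradients, average them."""
    shard_size = len(batch) // num_workers
    assert shard_size * num_workers == len(batch), "batch must split evenly"
    shards = [batch[i * shard_size:(i + 1) * shard_size]
              for i in range(num_workers)]
    # Each entry stands in for the gradient one worker would compute locally.
    local_grads = [mse_gradient(w, shard) for shard in shards]
    # Averaging stands in for the all-reduce step across workers.
    return sum(local_grads) / num_workers


# Hypothetical data following y = 2 * x, with a deliberately wrong weight.
batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
w = 0.5
single = mse_gradient(w, batch)
parallel = data_parallel_gradient(w, batch, num_workers=2)
print(single, parallel)  # -22.5 -22.5
```

Because the two results agree, adding workers changes how long a step takes rather than what the step computes. This is why gradient averaging is a common basis for multi-GPU training in both frameworks.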

Considerations for PyTorch in 2026:

Despite its strengths, PyTorch still faces some considerations for enterprises focusing solely on production at scale. While TorchServe and other initiatives have closed the gap, TensorFlow’s end-to-end tooling for deployment, monitoring, and MLOps can still be perceived as more comprehensive and mature by some organizations. However, for large-scale research-driven projects or companies that prioritize rapid iteration and state-of-the-art model development, PyTorch is an exceptionally strong contender in 2026. The increasing adoption of PyTorch for large-scale machine learning in industry, not just research, testifies to its growing maturity and capability.

TensorFlow in 2026: Production Robustness and Enterprise Scale

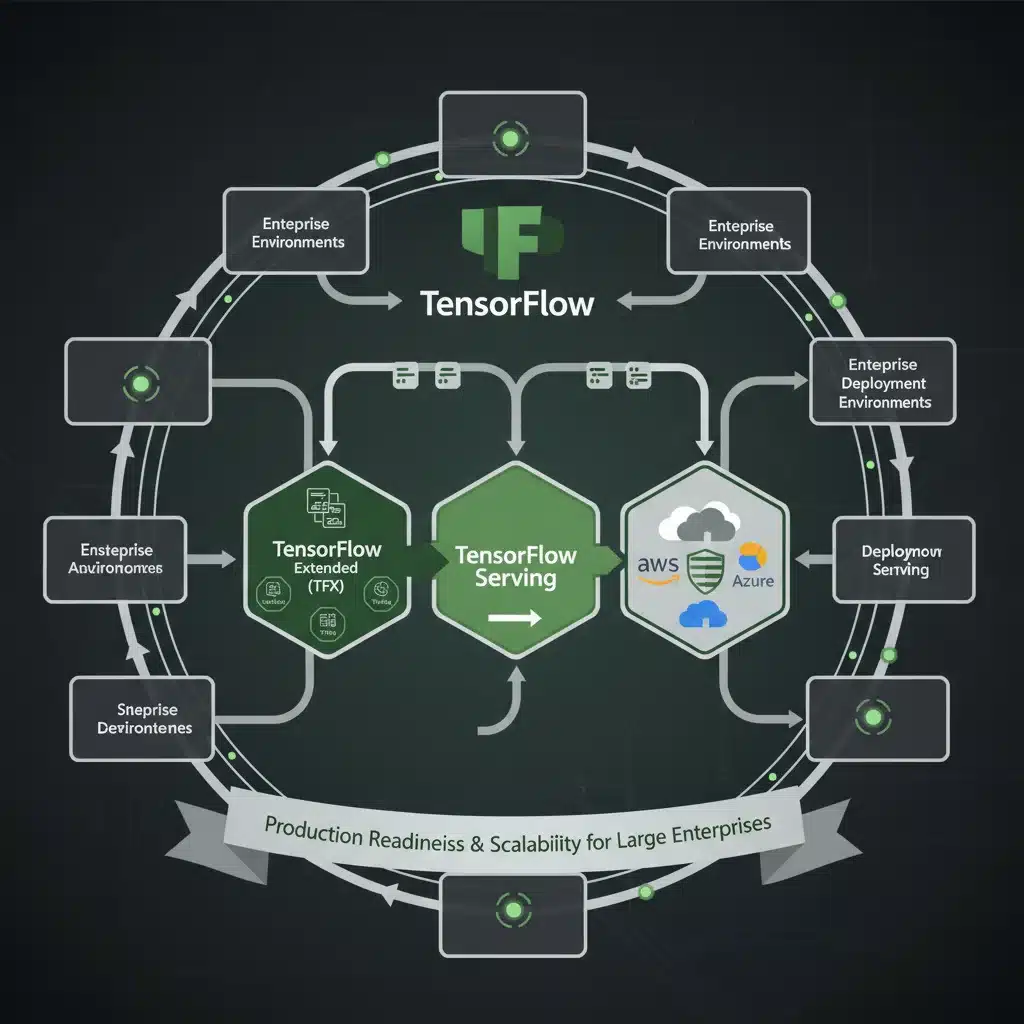

TensorFlow, as we see it in 2026, continues to be a dominant force, particularly in enterprise environments where robustness, scalability, and an end-to-end MLOps pipeline are paramount. Backed by Google, TensorFlow has always emphasized deployment and production readiness, offering a comprehensive suite of tools that cover the entire machine learning lifecycle. While PyTorch gained ground in research, TensorFlow has strategically evolved to incorporate features that appeal to researchers while doubling down on its strengths for large-scale, mission-critical deployments.

Key Strengths of TensorFlow for Large-Scale ML in the US:

- Comprehensive MLOps Ecosystem (TFX): TensorFlow Extended (TFX) provides a robust platform for building and managing ML pipelines at scale. It includes components for data validation, feature engineering, model training, evaluation, and serving, making it a strong choice for organizations requiring governance and reproducibility in their large-scale ML operations.

- TensorFlow Serving and Lite: These components are crucial for deployment. TensorFlow Serving allows for high-performance, low-latency serving of models in production, while TensorFlow Lite (rebranded by Google as LiteRT in 2024) enables on-device machine learning for mobile and IoT applications. This versatility is a significant advantage for companies targeting diverse deployment scenarios.

- Static and Eager Execution: While traditionally known for its static graph, TensorFlow has executed eagerly by default since version 2.0 and keeps graph compilation available through tf.function. Developers get the flexibility and debugging ease of eager execution during development and can then compile the same Python functions into a static graph for optimized performance in production, which is especially beneficial for large-scale training.

- Scalability and Distributed Computing: TensorFlow has always excelled at distributed training, with mature support for various strategies (e.g., MirroredStrategy, MultiWorkerMirroredStrategy, ParameterServerStrategy). This makes it highly efficient for training massive models on large clusters, a common requirement for large-scale machine learning projects in the US.

- Cross-Platform Deployment: With TensorFlow.js for web deployment and TensorFlow Lite for mobile/edge, TensorFlow covers browsers, phones, and embedded devices from a single framework, extending the reach of large-scale ML applications.

- Industry Adoption and Enterprise Support: Many large enterprises, especially those with existing Google Cloud infrastructure, have deeply integrated TensorFlow into their AI strategies. The extensive documentation, corporate backing, and long-term support make it a safe and reliable choice for critical large-scale projects.
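The "develop eagerly, then compile to a graph" workflow mentioned in the list above can be illustrated with a toy tracer. This is a framework-free sketch of the record-once, replay-many idea only. It is not how tf.function is implemented, and `Sym`, `trace`, and `model` are hypothetical names. It supports just addition and multiplication on a single scalar input.

```python
class Sym:
    """Placeholder that records operations instead of computing them."""

    def __init__(self, tape, index):
        self.tape = tape
        self.index = index  # slot that will hold this value at replay time

    def _record(self, op, other):
        operand = other.index if isinstance(other, Sym) else ("const", other)
        self.tape.append((op, self.index, operand))
        # Slot 0 is the input, so the n-th recorded op writes slot n.
        return Sym(self.tape, len(self.tape))

    def __add__(self, other):
        return self._record("add", other)

    def __mul__(self, other):
        return self._record("mul", other)


def trace(fn):
    """Run fn once on a placeholder and return a function that replays the tape."""
    tape = []
    out_index = fn(Sym(tape, 0)).index

    def compiled(x):
        slots = [x]
        for op, left, right in tape:
            a = slots[left]
            b = right[1] if isinstance(right, tuple) else slots[right]
            slots.append(a + b if op == "add" else a * b)
        return slots[out_index]

    return compiled


def model(x):
    # Works eagerly on plain numbers and symbolically on Sym placeholders.
    return x * x + x * 3 + 1


compiled = trace(model)          # the Python body of model runs once, here
print(model(2), compiled(2))     # 11 11
print(compiled(5))               # 41
```

After `trace` returns, `compiled` no longer runs the Python body of `model`. It only replays the recorded operations, which is the kind of fixed program a graph optimizer can work with. A tape like this captures only the operations that ran during the single traced call, which is one reason graph compilers need special handling for data-dependent control flow.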

Considerations for TensorFlow in 2026:

While TensorFlow’s ecosystem is undeniably powerful, its steep learning curve for beginners and its sometimes-verbose API can be a deterrent for new entrants or smaller teams prioritizing rapid experimentation. However, for established organizations embarking on large-scale machine learning deployments, the comprehensive tooling and production-readiness of TensorFlow often outweigh these initial challenges. The framework’s continuous evolution, particularly in improving user experience and integrating with modern ML practices, solidifies its position as a top contender for enterprise-grade AI in 2026.

PyTorch vs TensorFlow 2026: A Direct Comparison for Large-Scale ML

To provide a clearer picture for decision-makers in the US, let’s conduct a direct comparison of PyTorch vs TensorFlow 2026 across several critical dimensions relevant to large-scale machine learning projects:

1. Ease of Use and API Design:

- PyTorch: Generally considered more Pythonic and intuitive. Its imperative programming style and dynamic graphs make it easier to learn, debug, and prototype. This is a significant advantage for researchers and smaller teams needing fast iteration.

- TensorFlow: Historically had a steeper learning curve due to its static graph paradigm. However, with eager execution and Keras as its high-level API, it has become much more user-friendly. Still, its comprehensive API can feel more verbose for simple tasks.

- Verdict for Large-Scale ML: For initial model development and research, PyTorch often wins on ease of use. For robust, standardized enterprise pipelines, TensorFlow’s structured approach with Keras and TFX can be more beneficial in the long run.

2. Flexibility and Research Capabilities:

- PyTorch: Excels in flexibility due to its dynamic computational graph. This is ideal for cutting-edge research, models with dynamic architectures, and scenarios where immediate feedback during development is crucial. Many state-of-the-art models are initially developed in PyTorch.

- TensorFlow: While capable of dynamic execution, its core strength lies in optimizing static graphs for performance. It’s highly flexible for a wide range of models but might require more boilerplate for highly experimental architectures compared to PyTorch.

- Verdict for Large-Scale ML: PyTorch generally holds an edge for pure research and rapid prototyping of novel large-scale models.

3. Production Readiness and Deployment:

- PyTorch: Has significantly matured with TorchServe and better cloud integrations. It is increasingly used in production, especially for models developed with its research-first approach.

- TensorFlow: Historically the leader here, with TensorFlow Serving, TFX, and broad enterprise adoption. Its ecosystem is designed from the ground up for robust, scalable, and maintainable production deployments, making it a strong choice for organizations requiring stringent MLOps practices for their large-scale systems.

- Verdict for Large-Scale ML: TensorFlow still has a slight lead in terms of a fully mature, end-to-end MLOps and deployment ecosystem for highly regulated or mission-critical large-scale systems.

4. Scalability and Distributed Training:

- PyTorch: Has made significant advancements, offering powerful tools for distributed training across multiple GPUs and nodes. PyTorch Lightning further simplifies this process.

- TensorFlow: Has a long-standing reputation for excellent distributed training capabilities, handling large-scale computations efficiently across clusters. Its strategies are well-documented and robust.

- Verdict for Large-Scale ML: Both frameworks are highly capable of handling large-scale distributed training in 2026. The choice often comes down to team familiarity and existing infrastructure.

5. Community and Ecosystem:

- PyTorch: Boasts a rapidly growing and highly active community, particularly strong in academia and research. Many new papers and open-source projects are released in PyTorch.

- TensorFlow: Has a massive, well-established community with extensive resources, tutorials, and corporate support from Google. Its ecosystem is vast and mature, covering almost every aspect of ML.

- Verdict for Large-Scale ML: Both have vibrant communities, but PyTorch’s growth rate is impressive, while TensorFlow’s maturity offers stability and a wealth of existing solutions.

6. Cloud Integration:

- PyTorch: Excellent integration with major cloud platforms (AWS, Azure, GCP), with increasing native support and optimized services.

- TensorFlow: Naturally deeply integrated with Google Cloud Platform (GCP) and Vertex AI, offering seamless workflows. Also well-supported on other cloud platforms.

- Verdict for Large-Scale ML: Both offer strong cloud integration; the choice might depend on your primary cloud provider and existing infrastructure.

Choosing Between PyTorch and TensorFlow 2026 for Your US Project

The decision between PyTorch and TensorFlow 2026 is rarely black and white for large-scale machine learning projects. It often boils down to the specific context, team expertise, and the stage of your project lifecycle. Here are some guiding principles for organizations in the US:

Opt for PyTorch if:

- Your project heavily involves cutting-edge research, novel model architectures, or highly experimental AI.

- Your team prioritizes rapid prototyping, quick iteration, and ease of debugging.

- You need maximum flexibility in model design and are working with dynamic computational requirements.

- Your team has a strong Python background and appreciates a more ‘Pythonic’ development experience.

- You are leveraging state-of-the-art models from the research community, as many are initially implemented in PyTorch.

- You are comfortable with a slightly less opinionated MLOps pipeline and prefer building custom deployment solutions using tools like TorchServe.

Opt for TensorFlow if:

- Your primary goal is robust, scalable, and maintainable production deployment of large-scale models.

- You require a comprehensive, end-to-end MLOps platform (like TFX) for governance, reproducibility, and automation.

- Your organization has existing infrastructure heavily invested in Google Cloud Platform or relies on TensorFlow’s specific deployment tools (TensorFlow Serving, Lite, JS).

- You are working on projects requiring on-device inference (mobile, IoT) or web deployment.

- Your team prefers a more structured and opinionated framework for large-scale enterprise applications.

- You need long-term stability and corporate backing for mission-critical AI systems.
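The two checklists above can be turned into a rough tally for team discussions. The sketch below is a hypothetical helper, not an established methodology. The trait labels are shorthand invented here for the bullets in the two lists, and every criterion is weighted equally, which is a simplifying assumption a real evaluation would probably revisit.

```python
# Shorthand labels for the "Opt for PyTorch if" bullets (hypothetical names).
PYTORCH_SIGNALS = {
    "cutting_edge_research",
    "rapid_prototyping",
    "dynamic_model_design",
    "strong_python_background",
    "uses_research_models",
    "custom_deployment_ok",
}

# Shorthand labels for the "Opt for TensorFlow if" bullets (hypothetical names).
TENSORFLOW_SIGNALS = {
    "production_first",
    "needs_end_to_end_mlops",
    "invested_in_gcp_or_tf_tooling",
    "on_device_or_web_inference",
    "prefers_opinionated_framework",
    "needs_long_term_backing",
}


def recommend(project_traits):
    """Count matching criteria per framework and report which way they lean."""
    traits = set(project_traits)
    unknown = traits - PYTORCH_SIGNALS - TENSORFLOW_SIGNALS
    if unknown:
        raise ValueError(f"unrecognized traits: {sorted(unknown)}")
    pytorch_score = len(traits & PYTORCH_SIGNALS)
    tensorflow_score = len(traits & TENSORFLOW_SIGNALS)
    if pytorch_score > tensorflow_score:
        leaning = "PyTorch"
    elif tensorflow_score > pytorch_score:
        leaning = "TensorFlow"
    else:
        leaning = "no clear leaning"
    return leaning, pytorch_score, tensorflow_score


print(recommend(["cutting_edge_research", "rapid_prototyping", "production_first"]))
# ('PyTorch', 2, 1)
```

A tie or a narrow margin is itself useful information: it suggests the decision should rest on factors the checklist does not capture, such as existing infrastructure or hiring plans.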

The Future of PyTorch and TensorFlow in the US ML Landscape

Looking ahead to the rest of 2026 and beyond, both PyTorch and TensorFlow are expected to continue their impressive growth and evolution. The trend of convergence, where each framework adopts features and best practices from the other, is likely to persist. TensorFlow will continue to enhance its research capabilities and ease of use, while PyTorch will further strengthen its production-readiness and MLOps tooling.

In the US, the demand for skilled professionals in both frameworks will remain high. Companies are increasingly adopting multi-framework strategies, leveraging PyTorch for R&D and TensorFlow for production, or even using both within different departments or for different project types. Interchange formats like ONNX (Open Neural Network Exchange) also facilitate interoperability: a model trained in one framework can be exported to ONNX and served by a different runtime, blurring the lines further.

Ultimately, the choice between PyTorch and TensorFlow 2026 for large-scale machine learning projects in the US will depend less on a definitive ‘winner’ and more on a careful assessment of project-specific needs, team expertise, and strategic alignment. Both frameworks are exceptionally powerful, and their continued development ensures that the US tech industry has access to world-class tools to drive the next wave of AI innovation.

Conclusion

The debate of PyTorch vs TensorFlow 2026 for large-scale machine learning projects in the US is not about finding a universally superior tool, but rather about identifying the optimal fit for specific challenges and environments. PyTorch shines in its flexibility, ease of use for researchers, and rapid prototyping capabilities, making it ideal for pioneering new AI models. TensorFlow, with its robust MLOps ecosystem, comprehensive deployment tools, and strong enterprise support, remains the powerhouse for production-grade, scalable AI systems. Both frameworks have evolved significantly, addressing their former weaknesses and offering powerful solutions for distributed training, cloud integration, and community support.

As organizations in the US continue to push the boundaries of AI, understanding the distinct philosophies and strengths of each framework will be crucial. The best approach often involves a pragmatic evaluation, considering factors like team proficiency, project phase (research vs. production), existing infrastructure, and long-term maintenance goals. By making an informed decision, companies can harness the full potential of either PyTorch or TensorFlow 2026 to build and deploy groundbreaking large-scale machine learning applications that drive innovation and competitive advantage in the dynamic US market.