2026 AI Tool Compliance: Data Privacy in the US

Navigating 2026 AI Tool Updates: Insider Knowledge on Compliance for Data Privacy in the United States

The year 2026 is rapidly approaching, bringing with it a pivotal shift in the landscape of artificial intelligence. For businesses operating within the United States, understanding and preparing for these changes, particularly concerning data privacy, is not merely advisable but absolutely critical. The rapid evolution of AI technologies has outpaced traditional regulatory frameworks, leading to a scramble among lawmakers to establish robust guidelines that protect individual rights while fostering innovation. This comprehensive guide delves into the anticipated AI Compliance 2026 updates, offering insider knowledge to help organizations proactively adapt and thrive in this new regulatory environment. We’ll explore the key legislative drivers, the potential impact on various industries, and actionable strategies for ensuring your AI tools remain compliant with emerging data privacy standards.

The Evolving Regulatory Landscape for AI in the US

The United States, unlike the European Union with its comprehensive General Data Protection Regulation (GDPR), has historically adopted a sector-specific approach to data privacy. However, the proliferation of AI and its profound implications for data collection, processing, and decision-making have spurred a more unified and urgent response. Several key pieces of legislation and proposed frameworks are shaping the discussion around AI Compliance 2026.

Key Legislative Drivers and Proposals

- State-Level Privacy Laws: States like California (CCPA/CPRA), Virginia (VCDPA), Colorado (CPA), Utah (UCPA), and Connecticut (CTDPA) have already established robust data privacy laws. While not exclusively focused on AI, these laws lay the groundwork for individual data rights, including access, deletion, and opt-out, which directly impact how AI systems handle personal information. We anticipate further harmonization and expansion of these state-level protections, potentially leading to a de facto national standard.

- National AI Initiatives: In October 2023, the Biden administration issued Executive Order 14110 on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, which emphasized responsible AI development, data privacy, and algorithmic transparency. That order was rescinded in January 2025, a reminder that executive orders do not create new law and can change with each administration. Even so, executive actions signal federal priorities and direct agencies to develop guidelines that can influence future legislation.

- Proposed Federal Legislation: Several federal bills addressing AI and data privacy are under consideration in Congress. These proposals often focus on areas like algorithmic bias, data security, transparency in AI decision-making, and accountability for AI-driven harms. While the exact form of federal legislation by 2026 remains uncertain, the direction is clear: increased scrutiny and regulation of AI’s impact on data privacy.

- NIST AI Risk Management Framework: The National Institute of Standards and Technology (NIST) has released its AI Risk Management Framework (AI RMF), providing voluntary guidance for managing risks associated with AI. While voluntary, this framework is likely to become a de facto standard that regulators and courts will reference when assessing responsible AI practices. Adhering to the AI RMF can significantly bolster an organization’s position regarding AI Compliance 2026.

The convergence of these state, federal, and guidance initiatives creates a complex, yet predictable, trajectory towards more stringent AI Compliance 2026 requirements. Organizations that begin their preparation now will be in a much stronger position to adapt.

Data Privacy at the Core of AI Compliance

At the heart of AI Compliance 2026 is the principle of data privacy. AI systems are inherently data-hungry, relying on vast quantities of information to learn, predict, and automate. This reliance raises critical questions about how personal data is collected, stored, processed, and used. Future regulations will increasingly focus on:

Consent and Transparency

The days of opaque data collection practices are rapidly fading. By 2026, organizations will likely face more rigorous requirements for obtaining explicit, informed consent from individuals whose data is used to train or operate AI systems. This means clear, concise, and understandable explanations of:

- What data is being collected: Specific categories of personal information.

- How it will be used: The precise purposes for which AI will process the data.

- Who will have access: Any third parties or internal departments.

- How long it will be retained: Data retention policies.

- The right to withdraw consent: Easy mechanisms for individuals to opt out.
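As a rough illustration, the five disclosure items above can be captured in a single consent record that an AI pipeline checks before touching personal data. The sketch below is only one possible shape: the `ConsentRecord` class, its field names, and the `permits` check are hypothetical and not drawn from any statute or library.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional


@dataclass
class ConsentRecord:
    """Hypothetical record of one individual's consent (illustrative field names)."""
    subject_id: str
    data_categories: FrozenSet[str]  # what data is being collected
    purposes: FrozenSet[str]         # how it will be used
    recipients: FrozenSet[str]       # who will have access
    retention: timedelta             # how long it will be retained
    granted_at: datetime
    withdrawn_at: Optional[datetime] = None

    def withdraw(self, when: datetime) -> None:
        """Record a withdrawal; checks at or after this time fail."""
        self.withdrawn_at = when

    def permits(self, purpose: str, category: str, now: datetime) -> bool:
        """True only if consent is not withdrawn, not expired, and covers both purpose and category."""
        if self.withdrawn_at is not None and now >= self.withdrawn_at:
            return False
        if now >= self.granted_at + self.retention:
            return False
        return purpose in self.purposes and category in self.data_categories


# Example usage with made-up values
granted = datetime(2026, 1, 1, tzinfo=timezone.utc)
record = ConsentRecord(
    subject_id="user-123",
    data_categories=frozenset({"email"}),
    purposes=frozenset({"model_training"}),
    recipients=frozenset({"ml_team"}),
    retention=timedelta(days=30),
    granted_at=granted,
)
print(record.permits("model_training", "email", granted + timedelta(days=1)))
```

Keeping the purpose and category inside the record means a training job can be refused automatically when it asks for something the individual never agreed to.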

Transparency will extend to the very functioning of AI. While ‘explainable AI’ (XAI) is still an emerging field, regulators will increasingly expect businesses to articulate how their AI models arrive at certain decisions, especially when those decisions have significant impacts on individuals (e.g., credit scores, employment applications, healthcare diagnoses). This level of transparency is crucial for building trust and demonstrating accountability in AI Compliance 2026.

Data Minimization and Purpose Limitation

A core tenet of modern data privacy is data minimization – collecting only the data absolutely necessary for a specified purpose. This principle will be heavily enforced in AI Compliance 2026. Organizations must be able to justify why specific data points are required for their AI models and avoid collecting extraneous information. Similarly, purpose limitation dictates that data collected for one purpose should not be repurposed for another without renewed consent or a clear legal basis.
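One way to make both principles enforceable in code is a per-purpose allow-list applied before data reaches a model. The following is a minimal sketch under that assumption; the `ALLOWED_FIELDS` mapping, the purpose names, and the `minimize` function are hypothetical examples, not a prescribed method.

```python
# Hypothetical allow-list: each declared purpose maps to the only fields it may use.
ALLOWED_FIELDS = {
    "credit_scoring": {"income", "debt", "payment_history"},
    "fraud_detection": {"transaction_amount", "merchant_id", "device_id"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Return a copy of `record` containing only the fields allowed for `purpose`.

    Raises ValueError for an undeclared purpose, so data cannot be quietly
    repurposed without someone first adding and justifying a new entry.
    """
    if purpose not in ALLOWED_FIELDS:
        raise ValueError(f"No declared purpose named {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


# Example usage with made-up values
applicant = {"income": 52000, "debt": 8000, "zip_code": "94110", "device_id": "abc"}
print(minimize(applicant, "credit_scoring"))
```

The allow-list doubles as documentation: each entry is the justification record for why a data point is collected.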

Data Security and Anonymization

The security of data feeding AI systems is paramount. Breaches involving AI-processed data can have magnified impacts due to the interconnectedness and scale of AI operations. Robust cybersecurity measures, including encryption, access controls, and regular security audits, will be non-negotiable. Furthermore, techniques like anonymization and pseudonymization will become increasingly important. While true anonymization (where data cannot be linked back to an individual) is challenging, regulators will expect organizations to employ the strongest possible methods to protect individual identities within AI datasets.
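To make the distinction concrete, here is a minimal pseudonymization sketch using a keyed hash (HMAC-SHA256) from Python's standard library. The function names and the example key are illustrative assumptions. Note that this is pseudonymization, not anonymization: anyone holding the key can recompute the mapping, so the key itself needs strict access control.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256, hex-encoded)."""
    if not secret_key:
        raise ValueError("secret_key must not be empty")
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_record(record: dict, id_fields: set, secret_key: bytes) -> dict:
    """Return a copy of `record` with the fields named in `id_fields` pseudonymized."""
    return {
        key: pseudonymize(str(value), secret_key) if key in id_fields else value
        for key, value in record.items()
    }


# Example usage; in practice the key would come from a secrets manager, not source code.
key = b"example-key-for-illustration-only"
row = {"email": "jane@example.com", "purchase_total": 42.5}
print(pseudonymize_record(row, {"email"}, key))
```

Because the same input and key always yield the same token, records can still be joined across datasets for training without exposing the raw identifier.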

Individual Rights and Automated Decision-Making

Existing privacy laws grant individuals rights over their personal data. By 2026, these rights will be explicitly extended and strengthened in the context of AI. This includes:

- Right to Access: Individuals can request information about what data an AI system holds about them.

- Right to Correction: The ability to rectify inaccurate data.

- Right to Deletion (Right to Be Forgotten): The right to have personal data removed from AI training sets and operational systems, where feasible and legally permissible.

- Right to Opt-Out of Automated Decision-Making: The right to not be subject to a decision based solely on automated processing, including profiling, if it produces legal effects or similarly significant effects concerning them. This is a particularly challenging area for AI, requiring human oversight or alternative processes.

- Right to Explanation: The right to understand the rationale behind an AI’s decision, especially if it’s adverse.

Implementing mechanisms to honor these rights within complex AI architectures will be a significant challenge for businesses striving for AI Compliance 2026.
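As one illustration of how the opt-out and explanation rights might surface in application logic, the sketch below routes opted-out individuals to a human review queue and attaches reasons to every model-made decision. The `Decision` type, the `decide` function, the 0.5 threshold, and the reason strings are all hypothetical; real systems would need far more context.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Decision:
    outcome: str      # "approved", "denied", or "pending_human_review"
    decided_by: str   # "model" or "human_queue"
    reasons: List[str] = field(default_factory=list)


def decide(score: float, reasons: List[str], opted_out: bool,
           threshold: float = 0.5) -> Decision:
    """Apply a model score unless the individual opted out of automated decisions.

    `score` and `reasons` are assumed to come from an upstream model and an
    explanation step; the threshold is an arbitrary illustrative value.
    """
    if opted_out:
        # Honor the opt-out: no automated outcome, a person decides instead.
        return Decision("pending_human_review", "human_queue")
    outcome = "approved" if score >= threshold else "denied"
    # Keep the reasons with the decision so an explanation can be provided on request.
    return Decision(outcome, "model", list(reasons))


# Example usage with made-up values
print(decide(0.31, ["debt-to-income ratio above policy limit"], opted_out=False))
print(decide(0.31, ["debt-to-income ratio above policy limit"], opted_out=True))
```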

Impact Across Industries: Who Needs to Pay Attention?

While AI Compliance 2026 will have a broad impact, certain industries, due to their extensive use of personal data or reliance on critical AI applications, will face heightened scrutiny:

Healthcare and Life Sciences

AI in healthcare (e.g., diagnostics, drug discovery, personalized medicine) uses highly sensitive protected health information (PHI). Compliance with HIPAA will remain foundational, but new AI-specific regulations will likely add layers of requirements regarding algorithmic bias in diagnoses, data sharing with AI vendors, and the explainability of clinical AI decisions. The stakes are incredibly high, making robust AI Compliance 2026 essential.

Financial Services

AI is pervasive in finance, from fraud detection and credit scoring to algorithmic trading and customer service. Concerns about algorithmic bias leading to discriminatory lending practices, data security in financial transactions, and the transparency of AI-driven investment advice will drive new compliance mandates. Organizations must demonstrate fair and unbiased AI systems to meet AI Compliance 2026 standards.

Retail and E-commerce

Personalized recommendations, dynamic pricing, and targeted advertising all rely heavily on AI and vast consumer data. New regulations will likely focus on transparent data collection practices, granular consent for personalization, and protection against manipulative AI practices. The ability to manage and protect customer data ethically will be key to maintaining consumer trust and achieving AI Compliance 2026.

Human Resources and Employment

AI is increasingly used in recruitment, employee monitoring, and performance evaluations. This raises significant concerns about algorithmic bias in hiring, fairness in performance assessments, and the privacy of employee data. Regulations will aim to ensure that AI tools do not perpetuate or exacerbate discrimination and that employees retain privacy rights in the workplace. Proactive steps towards ethical AI in HR will be vital for AI Compliance 2026.

Strategies for Ensuring AI Compliance by 2026

Preparing for AI Compliance 2026 requires a multi-faceted approach that integrates legal, technical, and ethical considerations. Here are actionable strategies for businesses:

1. Conduct a Comprehensive AI Audit

The first step is to understand your current AI landscape. Inventory all AI tools and systems in use, both internally developed and third-party solutions. For each system, document:

- Data Sources: Where does the data come from? Is it personal data?

- Data Types: What categories of data are being processed?

- Purpose of Use: What is the AI designed to achieve?

- Data Flow: How is data collected, stored, processed, and shared?

- Decision-Making Process: How does the AI arrive at its conclusions?

- Risk Assessment: What are the potential privacy, security, and ethical risks associated with each AI system?

This audit will provide a baseline for identifying areas of non-compliance and prioritizing remediation efforts for AI Compliance 2026.
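The inventory described above can start as something as simple as one structured record per system. The sketch below is a hypothetical schema that mirrors the six documentation items, plus a basic check that flags incomplete entries; the class, field names, and flagging rule are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AISystemEntry:
    """One row in a hypothetical AI inventory, mirroring the audit checklist."""
    name: str
    data_sources: List[str]
    data_types: List[str]
    purpose: str
    data_flow: str
    decision_process: str
    uses_personal_data: bool
    risks: List[str] = field(default_factory=list)


def needs_review(entry: AISystemEntry) -> bool:
    """Flag systems that handle personal data but lack a documented
    risk assessment or decision-making description."""
    undocumented = not entry.risks or not entry.decision_process.strip()
    return entry.uses_personal_data and undocumented


# Example usage with made-up systems
inventory = [
    AISystemEntry("resume-screener", ["applicant portal"], ["employment history"],
                  "rank applicants", "portal -> vendor API", "", True),
    AISystemEntry("demand-forecast", ["sales database"], ["aggregate sales"],
                  "forecast inventory", "warehouse -> model", "gradient boosting", False),
]
print([entry.name for entry in inventory if needs_review(entry)])
```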

2. Establish an AI Governance Framework

A robust AI governance framework is essential. This framework should define:

- Roles and Responsibilities: Who is accountable for AI ethics, data privacy, and compliance? This might include a Chief AI Ethics Officer or a dedicated AI governance committee.

- Policy Development: Create clear internal policies for AI development, deployment, and use, covering data privacy, algorithmic fairness, transparency, and accountability.

- Risk Management: Implement processes for continuous identification, assessment, and mitigation of AI-related risks.

- Ethical Guidelines: Develop and embed ethical principles into your AI development lifecycle.

This framework will serve as your organizational blueprint for achieving and maintaining AI Compliance 2026.

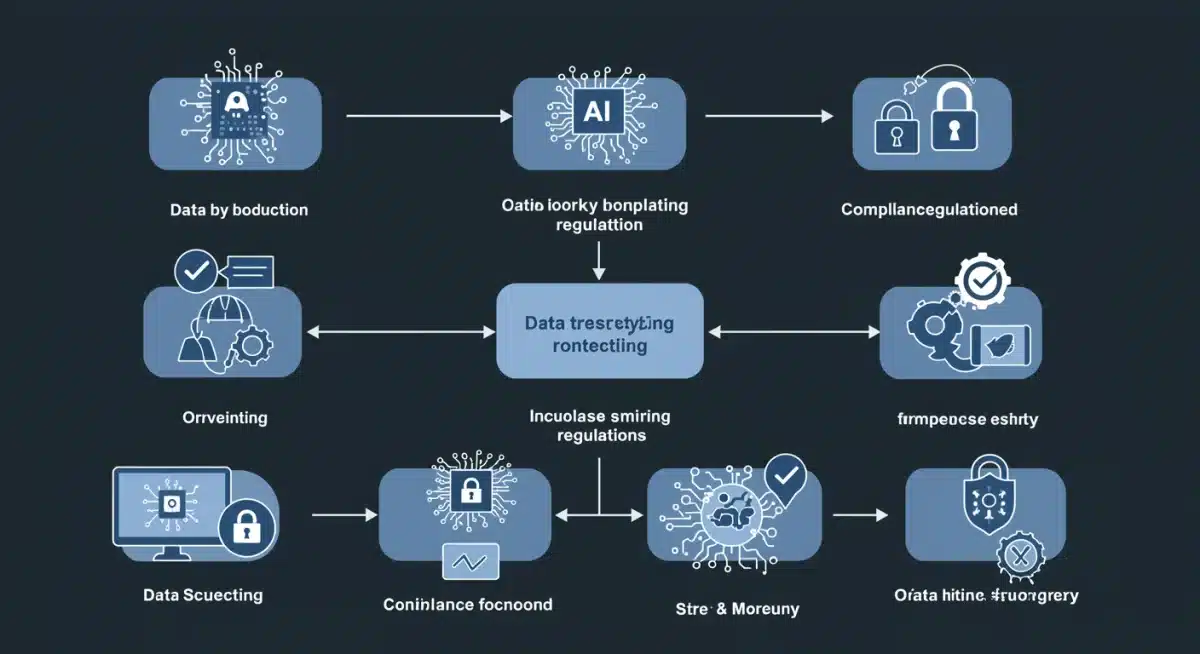

3. Prioritize Data Privacy by Design and Default

Integrate privacy considerations from the very outset of AI system design (Privacy by Design). This means:

- Data Minimization: Engineer systems to collect only essential data.

- Anonymization/Pseudonymization: Implement these techniques wherever possible.

- Security Measures: Build in robust security from the ground up.

- Consent Mechanisms: Design user interfaces that facilitate clear, informed consent.

- Impact Assessments: Conduct Data Protection Impact Assessments (DPIAs) or AI Impact Assessments (AIIAs) for new AI initiatives.

By making privacy a default setting, you significantly reduce future compliance burdens for AI Compliance 2026.

4. Invest in Explainable AI (XAI) and Transparency Tools

While full explainability is a complex technical challenge, businesses should invest in tools and methodologies that enhance the transparency of their AI systems. This includes:

- Model Documentation: Thoroughly document AI models, including training data, assumptions, and decision logic.

- Feature Importance Analysis: Understand which data inputs most influence AI outputs.

- User-Facing Explanations: Develop ways to communicate AI decisions to users in a clear and understandable manner, especially for automated decisions.

- Bias Detection and Mitigation: Implement tools to identify and address algorithmic bias in training data and model outputs.

These efforts will be crucial for meeting transparency demands under AI Compliance 2026.
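For bias detection, one simple and widely used starting point is to compare selection rates across groups. The sketch below computes a disparate impact ratio (lowest group rate divided by highest); a ratio below 0.8 is often treated as a warning sign under the "four-fifths" rule of thumb from US employment guidance. The function names and sample data are illustrative assumptions, and a single ratio is no substitute for a full fairness review.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of favorable outcomes per group.

    `outcomes` is an iterable of (group_label, was_selected) pairs.
    """
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(outcomes: Iterable[Tuple[str, bool]]) -> float:
    """Return the lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no outcomes provided")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


# Example usage with made-up hiring outcomes
sample = ([("group_a", True)] * 8 + [("group_a", False)] * 2
          + [("group_b", True)] * 4 + [("group_b", False)] * 6)
ratio = disparate_impact_ratio(sample)
print(f"ratio={ratio:.2f}, flag={'review' if ratio < 0.8 else 'ok'}")
```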

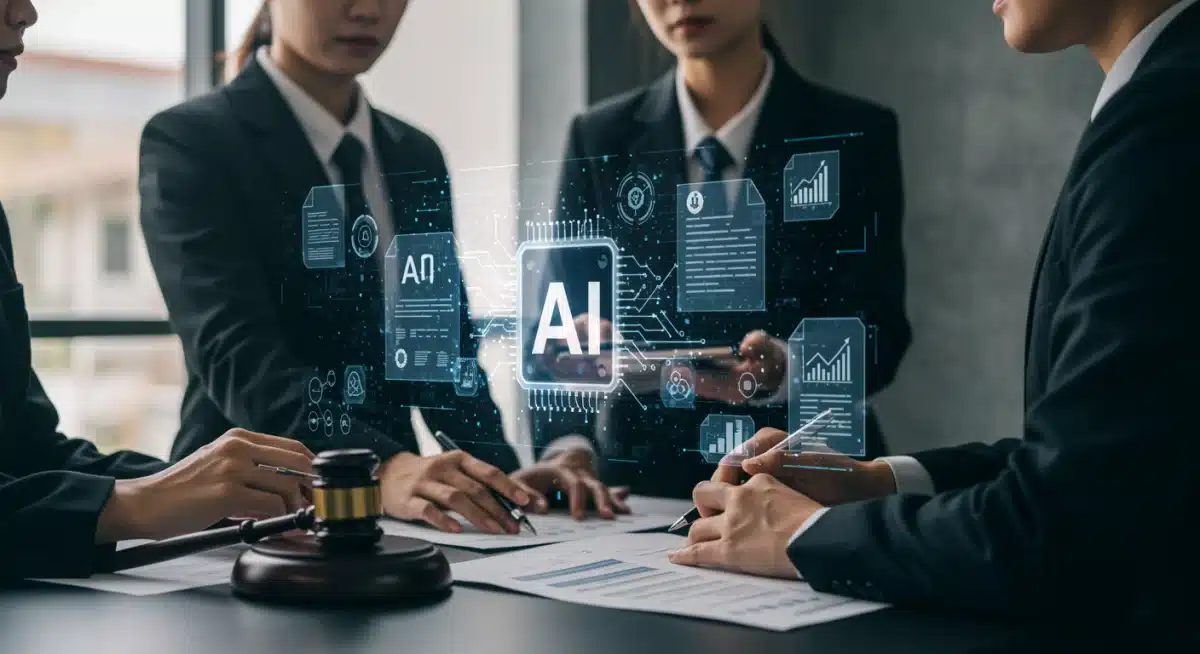

5. Foster Cross-Functional Collaboration

AI Compliance 2026 is not solely an IT or legal issue. It requires collaboration across departments:

- Legal Counsel: To interpret regulations and advise on compliance strategy.

- Data Scientists/Engineers: To implement privacy-by-design principles and develop explainable AI.

- Product Development: To integrate compliance requirements into new AI products and features.

- Ethics Committee: To guide responsible AI development and deployment.

- Security Teams: To ensure robust data protection.

Breaking down silos and encouraging dialogue will be critical for a holistic approach to AI Compliance 2026.

6. Stay Informed and Adapt

The regulatory landscape for AI is dynamic, and what is true today may change by 2026. Businesses must commit to continuous monitoring of legislative developments, industry best practices, and emerging technologies. Engage with industry associations, regulatory bodies, and legal experts to stay ahead of the curve. Regular reviews of your AI governance framework and compliance strategies will be necessary to adapt to new requirements for AI Compliance 2026.

The Costs of Non-Compliance

The penalties for failing to meet AI Compliance 2026 standards can be severe, extending beyond monetary fines. These include:

- Significant Fines: State and federal regulators are likely to impose substantial financial penalties, potentially reaching millions of dollars, similar to those seen under GDPR.

- Reputational Damage: Data breaches or ethical missteps involving AI can severely damage a company’s brand, leading to loss of customer trust and market share.

- Legal Action: Non-compliance can result in class-action lawsuits from affected individuals and enforcement actions from regulatory agencies.

- Operational Disruption: Remediation efforts after a compliance failure can be costly and divert resources from core business activities.

- Loss of Competitive Advantage: Companies that fall behind on compliance may find it harder to innovate or gain market access for their AI products.

The cost of investing proactively in AI Compliance 2026 is far lower than the potential costs and risks of non-compliance.

Conclusion: Preparing for an AI-Regulated Future

The journey towards AI Compliance 2026 is not a sprint but a marathon. It demands foresight, strategic planning, and a commitment to ethical AI development and data privacy. By understanding the evolving regulatory landscape, prioritizing data privacy, establishing robust governance frameworks, and fostering cross-functional collaboration, businesses can confidently navigate the upcoming changes. Proactive preparation will not only ensure compliance but also build greater trust with customers, enhance brand reputation, and ultimately drive sustainable innovation in the age of artificial intelligence. The future of AI in the United States is one where innovation and regulation must go hand in hand, and organizations that embrace this reality early will be the ones that lead the way.