Navigating 2026 AI Regulations: A Business Leader’s Guide to U.S. Compliance and Avoiding 15% Fines (RECENT UPDATES)

Artificial Intelligence (AI) is evolving rapidly, transforming industries and reshaping how businesses operate. It also raises complex ethical, legal, and societal challenges, and governments worldwide are moving to establish regulatory frameworks for responsible AI development and deployment. For business leaders in the United States, understanding and preparing for the impending AI Regulations 2026 is not just a matter of good governance; it is essential to avoiding significant financial penalties and reputational damage.

The landscape of AI regulation is fluid and multifaceted, with various federal and state bodies proposing and enacting different rules. This guide aims to demystify the upcoming U.S. AI regulatory environment, providing a strategic roadmap for business leaders to navigate compliance, mitigate risks, and leverage AI responsibly. We’ll delve into the key legislative trends, highlight recent updates, and outline actionable steps to ensure your organization is not only compliant but also positioned for success in an AI-driven future.

The Urgent Need for AI Regulations: Why 2026 is a Pivotal Year

The year 2026 is emerging as a critical juncture for AI regulation in the U.S. This is largely due to the accelerating pace of AI adoption across sectors, from healthcare and finance to manufacturing and retail. As AI systems become more sophisticated and autonomous, concerns about data privacy, algorithmic bias, transparency, accountability, and job displacement have grown. These concerns are not merely theoretical; real-world instances of biased algorithms, data breaches, and ethical dilemmas have underscored the need for robust oversight.

Without clear guidelines, businesses face uncertainty, consumers lack protection, and the potential for AI misuse escalates. The U.S. government, through various agencies and legislative initiatives, is working to strike a balance: fostering innovation while safeguarding public interest. This dual objective is at the heart of the emerging AI Regulations 2026. Companies that fail to adapt risk not only hefty fines (the 15% of global revenue cited in this guide is an illustrative figure, not a penalty set by any current U.S. law) but also a significant erosion of customer trust and brand value.

Understanding the impetus behind these regulations is the first step towards effective compliance. It’s about recognizing that AI is no longer just a technological tool; it’s a societal force that requires careful stewardship.

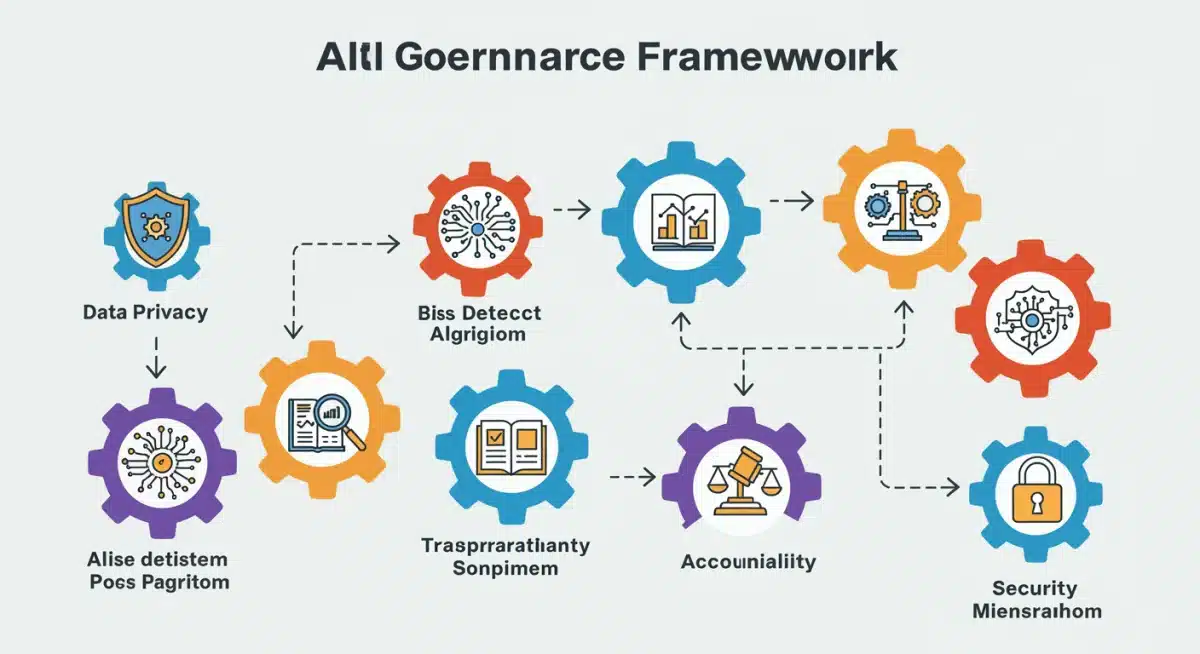

Key Pillars of U.S. AI Regulatory Frameworks

While a single, overarching federal AI law akin to Europe’s AI Act has yet to materialize in the U.S., a mosaic of regulations, guidelines, and proposed legislation is forming. Business leaders must monitor developments across several key areas:

1. Data Privacy and Security

At the core of many AI applications is data. The collection, processing, and storage of personal data by AI systems are under intense scrutiny. Existing laws like the California Consumer Privacy Act (CCPA), as amended and expanded by the California Privacy Rights Act (CPRA), serve as precursors to broader privacy protection. Federal efforts, while still debated, are likely to impose stricter requirements on how AI systems handle sensitive information. Businesses leveraging AI must implement robust data governance strategies, ensuring transparency in data collection, obtaining explicit consent, and safeguarding against breaches. Non-compliance here can lead to significant penalties, as seen with general data privacy violations.

2. Algorithmic Bias and Fairness

One of the most pressing ethical concerns in AI is algorithmic bias. If AI models are trained on biased data or designed with flawed assumptions, they can perpetuate and even amplify existing societal inequalities in areas like hiring, lending, healthcare, and criminal justice. The White House’s ‘Blueprint for an AI Bill of Rights’ and the National Institute of Standards and Technology (NIST) AI Risk Management Framework specifically address fairness and non-discrimination. Future AI Regulations 2026 are expected to mandate regular audits for bias, require documentation of fairness metrics, and potentially impose liability for discriminatory outcomes. Companies must invest in diverse datasets, employ bias detection tools, and establish human oversight mechanisms to ensure equitable AI outputs.
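
As one illustration of a documented fairness metric, the sketch below computes a disparate impact ratio: the lowest group selection rate divided by the highest. The source does not prescribe a metric; the 0.8 threshold is the four-fifths rule of thumb from U.S. employment selection guidance, used here as an assumption, and the function names and sample data are hypothetical.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the AI system produced the favorable outcome.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome")
    return min(rates.values()) / highest

# Hypothetical audit sample: (group label, was the candidate shortlisted?)
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
ratio = disparate_impact_ratio(sample)
flagged = ratio < 0.8  # four-fifths rule of thumb (an assumption, not a legal test)
```

A ratio below the chosen threshold does not prove discrimination; it is a signal that the system deserves closer review by the people responsible for oversight.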

3. Transparency and Explainability (XAI)

The ‘black box’ nature of some advanced AI models makes it difficult to understand how they arrive at specific decisions. This lack of transparency can be problematic, especially in critical applications. Regulators are pushing for greater explainability (XAI), requiring businesses to provide clear, understandable explanations for AI-driven decisions, particularly when those decisions impact individuals. This could involve documenting model architecture, training data, and decision-making logic. For businesses, this means developing AI systems with built-in interpretability features and being prepared to articulate their AI processes to regulators and affected parties.

4. Accountability and Governance

Who is responsible when an AI system makes a mistake or causes harm? This question is central to accountability frameworks. Upcoming regulations will likely establish clear lines of responsibility within organizations for AI development, deployment, and monitoring. This includes designating AI governance teams, implementing internal policies and procedures, and conducting regular risk assessments. The concept of ‘human in the loop’ or ‘human on the loop’ will be crucial, ensuring that humans retain ultimate control and oversight over critical AI decisions. Establishing a robust AI governance framework now will be essential for navigating the AI Regulations 2026.

5. Sector-Specific Regulations

Beyond general principles, various sectors are seeing tailored AI regulations. For instance, the financial industry is under scrutiny from agencies like the CFPB and OCC regarding AI’s use in credit scoring and fraud detection. Healthcare AI is subject to HIPAA and FDA guidelines, focusing on patient safety and data privacy. Transportation, particularly autonomous vehicles, is another area where specific rules are rapidly evolving. Businesses must not only adhere to general AI principles but also stay abreast of their industry-specific regulatory landscape.

Recent Updates and Emerging Trends in U.S. AI Policy

The U.S. AI regulatory environment is highly dynamic. Several key developments signal the direction of future AI Regulations 2026:

- Executive Order on Safe, Secure, and Trustworthy AI (October 2023): This executive order (EO 14110) laid out a comprehensive strategy for AI oversight, directing federal agencies to set new standards for AI safety and security, protect privacy, advance equity, and promote competition. It emphasized the need for developers of powerful AI systems to share safety test results with the government and outlined measures to combat AI-enabled fraud. The order was rescinded in January 2025, so its directives are no longer in force, but it remains a useful reference for the risks federal policymakers have focused on.

- NIST AI Risk Management Framework (AI RMF): Published in January 2023, the AI RMF provides voluntary guidance for organizations to manage risks associated with AI. While voluntary, it’s increasingly seen as a de facto standard and could form the basis for future mandatory requirements. Businesses should align their AI risk management practices with NIST’s recommendations.

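- State-Level Initiatives: States like California, New York, and Colorado are actively pursuing their own AI legislation, often focusing on specific issues like AI in employment decisions or consumer protection. Colorado, for example, enacted a law on high-risk AI systems in 2024 (SB 24-205), and New York City already requires bias audits of automated employment decision tools (Local Law 144). These state and local efforts create a patchwork of regulations that businesses operating nationally must navigate.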

- Congressional Debates: Congress continues to hold hearings and propose various bills, though consensus on a federal AI law remains elusive. Key areas of debate include the scope of federal oversight, liability for AI systems, and the allocation of resources for AI research and development.

- Focus on Generative AI: The rise of powerful generative AI models has brought new challenges, including concerns about deepfakes, copyright infringement, and misinformation. Future regulations are likely to address these issues, potentially requiring watermarking of AI-generated content or mandating disclosure.

Staying informed about these ongoing developments is crucial. The regulatory landscape is a moving target, and proactive engagement is key to successful compliance with AI Regulations 2026.

The Cost of Non-Compliance: Understanding the 15% Fine Threat

The potential financial penalties for non-compliance with future AI Regulations 2026 are a significant concern for business leaders. While no single federal AI law currently specifies a 15% fine on global revenue, this figure is a powerful indicator of the severity regulators are considering, drawing parallels to the percentage-of-turnover penalties in the EU’s GDPR (up to 4%) and AI Act (up to 7%). Let’s break down where such a threat originates and why it’s a realistic possibility:

Precedent from Data Privacy Laws

The European Union’s General Data Protection Regulation (GDPR) sets a precedent with fines up to €20 million or 4% of annual global turnover, whichever is higher, for serious infringements. Similarly, the California Consumer Privacy Act (CCPA), as amended by the California Privacy Rights Act (CPRA), provides for civil penalties with base statutory amounts of up to $2,500 per violation and $7,500 per intentional violation, which add up quickly when a violation affects many consumers. As AI regulations often intersect with data privacy, it is plausible that future U.S. AI laws will adopt a similarly robust penalty structure to ensure deterrence and compliance.
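
To make the arithmetic concrete, here is a minimal sketch of the GDPR ceiling described above (the higher of €20 million or 4% of turnover), alongside the 15% scenario this guide uses as an illustration. The function names are hypothetical, and `illustrative_fine` models a projected figure, not an enacted U.S. rule.

```python
def gdpr_max_fine(annual_global_turnover_eur: float) -> float:
    """Ceiling for serious infringements: the higher of EUR 20 million
    or 4% of annual global turnover."""
    return max(20_000_000.0, 0.04 * annual_global_turnover_eur)

def illustrative_fine(annual_global_revenue: float, rate: float = 0.15) -> float:
    """Exposure under a hypothetical percentage-of-revenue penalty.

    The default 15% rate is this guide's illustrative figure only.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return rate * annual_global_revenue

small = gdpr_max_fine(100_000_000)           # 4% is 4M, so the 20M amount applies
large = gdpr_max_fine(2_000_000_000)         # 4% is 80M, which exceeds 20M
scenario = illustrative_fine(2_000_000_000)  # 15% of 2 billion is 300M
```

The comparison shows why a percentage-based ceiling matters most to large organizations: the fixed amount dominates for smaller firms, while the percentage dominates once turnover passes €500 million.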

The Scope of Harm

AI systems, particularly those operating at scale, have the potential to cause widespread harm, from systemic discrimination and financial loss to privacy breaches affecting millions. Regulators are acutely aware of this potential. To truly incentivize responsible AI development and deployment, penalties must be substantial enough to outweigh the perceived benefits of cutting corners on compliance. A figure such as 15%, therefore, reflects the perceived magnitude of risk and the desire to create a strong deterrent against irresponsible AI practices.

Reputational Damage and Litigation

Beyond direct fines, non-compliance carries immense reputational risks. Public outcry over biased AI, privacy violations, or ethical breaches can severely damage a company’s brand, erode customer trust, and lead to boycotts. Furthermore, the likelihood of class-action lawsuits and other litigation from affected individuals or groups will increase, adding further financial and legal burdens. The total cost of non-compliance far exceeds just regulatory fines.

Enforcement Trends

U.S. agencies like the Federal Trade Commission (FTC) are already signaling a proactive stance on AI. The FTC has warned against deceptive AI practices and has the authority to enforce existing consumer protection laws against companies misusing AI. As specific AI regulations come into force, the enforcement actions are expected to become more targeted and impactful, with higher penalty ceilings.

Therefore, while ‘15% of global revenue’ might be a projected or illustrative figure based on current regulatory trends and discussions, the underlying message is clear: the financial stakes for AI Regulations 2026 compliance will be exceptionally high. Businesses must prepare for penalties that can significantly impact their bottom line, making proactive compliance an economic necessity.

A Strategic Roadmap for AI Compliance: Actionable Steps for Business Leaders

Preparing for AI Regulations 2026 requires a proactive and integrated approach. Here’s a strategic roadmap for business leaders:

1. Establish an AI Governance Framework

- Cross-Functional Committee: Form a dedicated AI governance committee comprising legal, ethics, technical, and business leaders. This committee will be responsible for setting internal policies, monitoring compliance, and responding to regulatory changes.

- Ethical AI Principles: Develop and embed clear ethical AI principles into your organizational culture. These principles should guide all stages of AI development and deployment, from design to monitoring.

- Policy Development: Create comprehensive internal policies covering data privacy, algorithmic fairness, transparency, and accountability for all AI systems.

2. Conduct AI Risk Assessments and Audits

- Inventory AI Systems: Catalogue all AI systems currently in use or under development within your organization. Understand their purpose, data sources, and potential impact.

- Risk Mapping: For each AI system, identify potential risks related to bias, privacy, security, and ethical implications. Utilize frameworks like the NIST AI RMF for structured assessment.

- Regular Audits: Implement a schedule for regular internal and external audits of AI systems to assess performance, identify biases, and ensure compliance with internal policies and evolving regulations.
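
The inventory, risk-mapping, and audit steps above can be sketched as a small register. This is an assumption-laden illustration, not a reproduction of the NIST AI RMF: the 1 to 5 scoring scale, the field names, the audit threshold, and the example systems are all hypothetical.

```python
from dataclasses import dataclass, field

# Risk areas taken from the risk-mapping step described above.
RISK_AREAS = ("bias", "privacy", "security", "ethics")

@dataclass
class AISystem:
    name: str
    purpose: str
    data_sources: list[str]
    # Scores per risk area on a hypothetical 1 (low) to 5 (high) scale.
    risk_scores: dict[str, int] = field(default_factory=dict)

    def highest_risk(self) -> int:
        """Worst score across the risk areas; unassessed areas count as 5."""
        return max(self.risk_scores.get(area, 5) for area in RISK_AREAS)

def audit_queue(inventory, threshold=4):
    """Systems whose worst score meets the threshold, riskiest first."""
    due = [s for s in inventory if s.highest_risk() >= threshold]
    return sorted(due, key=lambda s: s.highest_risk(), reverse=True)

inventory = [
    AISystem("resume-screener", "rank job applicants", ["ATS exports"],
             {"bias": 5, "privacy": 3, "security": 2, "ethics": 4}),
    AISystem("demand-forecast", "predict weekly sales", ["POS data"],
             {"bias": 1, "privacy": 1, "security": 2, "ethics": 1}),
    AISystem("support-chatbot", "answer customer questions", ["chat logs"],
             {"bias": 2, "privacy": 4, "security": 3}),  # ethics not yet assessed
]
queue = [s.name for s in audit_queue(inventory)]
```

Treating an unassessed risk area as the worst case is a deliberate design choice here: a system cannot drop out of the audit queue simply because nobody has scored it yet.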

3. Prioritize Data Governance and Security

- Data Inventory and Lineage: Maintain a clear inventory of all data used to train and operate AI models, including its source, purpose, and usage rights.

- Data Quality and Bias Detection: Implement processes to assess and improve data quality, and actively detect and mitigate biases in training datasets.

- Enhanced Security Measures: Strengthen cybersecurity measures around AI systems and data pipelines to prevent unauthorized access, manipulation, or breaches.

- Privacy-Enhancing Technologies (PETs): Explore and implement PETs such as differential privacy or homomorphic encryption to protect sensitive data used by AI.
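
As a rough illustration of differential privacy, one of the PETs named above, the sketch below adds Laplace noise to a counting query. It is a teaching example under stated assumptions: the function names, the epsilon value, and the data are hypothetical, and a production system would rely on a vetted library and a secure noise source rather than Python's `random` module.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Noisy count of the items matching `predicate`.

    A counting query changes by at most 1 when one person's record is added
    or removed (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(0)  # fixed seed for a repeatable demo only
ages = [23, 35, 41, 52, 67, 29, 38, 70, 45, 31]
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
```

A smaller epsilon means more noise and stronger privacy; a larger epsilon means more accurate answers and weaker privacy. Choosing that trade-off is a governance decision as much as a technical one.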

4. Foster Transparency and Explainability

- Documentation: Maintain detailed documentation for all AI models, including design choices, training data, performance metrics, and decision-making logic.

- Explainable AI (XAI) Tools: Invest in and integrate XAI tools where appropriate to provide clear explanations for AI-driven decisions, especially in high-stakes applications.

- User Communication: Develop clear communication strategies to inform users when they are interacting with AI systems and explain how AI decisions are made, particularly when those decisions affect them directly.
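
For simple additive models, an explanation can be as direct as listing how much each input contributed to the score. The sketch below assumes a linear scoring model; the weights, feature names, and applicant values are made up for illustration, and more complex models would need dedicated XAI tooling instead.

```python
def explain_linear_score(weights, bias, features):
    """Break a linear score into per-feature contributions.

    score = bias + sum(weights[name] * features[name])
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank factors by the size of their effect, regardless of direction.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked

# Hypothetical credit-style model; weights and inputs are invented.
weights = {"income_k": 0.02, "late_payments": -0.5, "years_at_job": 0.1}
applicant = {"income_k": 60, "late_payments": 3, "years_at_job": 2}
score, ranked = explain_linear_score(weights, bias=0.5, features=applicant)
top_factor = ranked[0][0]  # the input with the largest effect on this decision
```

The ranked list can feed a plain-language notice to the affected person, for example naming the factor that weighed most heavily against an application.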

5. Invest in Training and Talent Development

- Employee Training: Educate employees across all relevant departments (legal, IT, product development, HR) on AI ethics, responsible AI practices, and regulatory requirements.

- Expertise Development: Invest in developing internal expertise in AI ethics, governance, and compliance. Consider hiring AI ethicists or legal professionals specializing in AI law.

6. Monitor Regulatory Landscape and Engage Stakeholders

- Continuous Monitoring: Assign dedicated resources to continuously monitor legislative developments at federal and state levels, as well as international AI regulatory trends.

- Industry Engagement: Participate in industry forums, consortia, and public consultations related to AI regulation. Your voice can help shape future policies.

- Legal Counsel: Work closely with legal counsel specializing in technology law to interpret regulations and ensure compliance.

Beyond Compliance: Building Trust and Competitive Advantage

While avoiding fines and legal repercussions is a primary driver for compliance with AI Regulations 2026, business leaders should view this as an opportunity to build trust and gain a competitive edge. Companies that demonstrate a strong commitment to ethical and responsible AI are more likely to attract and retain customers, talent, and investors.

Proactive compliance fosters innovation within ethical boundaries. By integrating responsible AI practices from the outset, businesses can design more robust, trustworthy, and socially beneficial AI solutions. This not only mitigates risks but also opens new avenues for market differentiation and sustainable growth. In a world increasingly wary of unchecked technological power, being a leader in responsible AI can become a powerful brand asset.

Conclusion: The Future of AI is Regulated, Be Prepared

The year 2026 marks a significant milestone in the journey of AI regulation in the U.S. The patchwork of existing laws, executive orders, and proposed legislation is rapidly converging into a more defined regulatory landscape. For business leaders, the message is clear: ignore the impending AI Regulations 2026 at your peril. The potential for substantial fines, reputational damage, and legal challenges is real and growing.

However, this is not merely a burden; it is an opportunity. By proactively establishing robust AI governance frameworks, prioritizing data integrity, ensuring transparency, and committing to ethical principles, businesses can not only achieve compliance but also build deeper trust with their stakeholders. This strategic foresight will not only protect your organization from penalties but will also position it as a responsible innovator, ready to harness the transformative power of AI for good. The future of AI is regulated, and those who prepare now will be the ones who lead.